Test: Process- 2 - Computer Science Engineering (CSE) MCQ

20 Questions MCQ Test GATE Computer Science Engineering(CSE) 2025 Mock Test Series - Test: Process- 2

Consider the following code fragment:

if (fork() == 0)

{ a = a + 5; printf("%d,%d ", a, &a); }

else { a = a - 5; printf("%d, %d ", a, &a); }

Let u, v be the values printed by the parent process, and x, y be the values printed by the child process. Which one of the following is TRUE?
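The behaviour the question asks about can be observed directly on a POSIX system. The following is a minimal sketch, not part of the original question: `run_fork_demo`, `struct observation` and `struct fork_result` are hypothetical names, and a pipe is assumed as the way for the child to report what it saw back to the parent.

```c
#include <stdint.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* What one process observed: the value of a and the virtual address of a. */
struct observation { int value; uintptr_t addr; };

/* Hypothetical result record, introduced only for this illustration. */
struct fork_result { int parent_a; int child_a; int same_address; };

/* Forks once; the child adds 5 to its copy of a, the parent subtracts 5
 * from its own copy. Returns 0 on success, -1 on any system-call failure. */
int run_fork_demo(int a, struct fork_result *out) {
    int fd[2];
    if (pipe(fd) != 0) return -1;

    pid_t pid = fork();
    if (pid < 0) { close(fd[0]); close(fd[1]); return -1; }

    if (pid == 0) {                          /* child process */
        a = a + 5;
        struct observation child = { a, (uintptr_t)&a };
        ssize_t n = write(fd[1], &child, sizeof child);
        _exit(n == (ssize_t)sizeof child ? 0 : 1);
    }

    a = a - 5;                               /* parent process */
    close(fd[1]);
    struct observation child;
    ssize_t n = read(fd[0], &child, sizeof child);
    close(fd[0]);
    waitpid(pid, NULL, 0);
    if (n != (ssize_t)sizeof child) return -1;

    out->parent_a = a;
    out->child_a = child.value;
    /* Both processes print a virtual address, so the numbers can match
     * even though the two copies live in different physical memory. */
    out->same_address = (child.addr == (uintptr_t)&a);
    return 0;
}
```

Calling `run_fork_demo(10, &r)` fills `r` with the parent's value, the child's value, and a flag saying whether both processes saw the same virtual address for `a`.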


The atomic fetch-and-set x, y instruction unconditionally sets the memory location x to 1 and fetches the old value of x in y without allowing any intervening access to the memory location x. Consider the following implementation of P and V functions on a binary semaphore.

void P (binary_semaphore *s) {

unsigned y;

unsigned *x = &(s->value);

do {

fetch-and-set x, y;

} while (y);

}

void V (binary_semaphore *s) {

s->value = 0;

}

Q. Which one of the following is true?
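For readers who want to compile the fragment, one way to approximate the fetch-and-set instruction is C11's `atomic_exchange`. This is a sketch under that assumption, not the question's own machine model: the `binary_semaphore` layout with a single `atomic_uint` field is hypothetical.

```c
#include <stdatomic.h>

/* Assumed layout: the question only says the semaphore has a `value` field. */
typedef struct { atomic_uint value; } binary_semaphore;

/* Busy-wait until the old value fetched from s->value is 0. */
void P(binary_semaphore *s) {
    unsigned y;
    do {
        /* Stand-in for "fetch-and-set x, y": store 1, get the old value. */
        y = atomic_exchange(&s->value, 1u);
    } while (y);
}

/* Release: mark the semaphore as free again. */
void V(binary_semaphore *s) {
    atomic_store(&s->value, 0u);
}
```

With `value` initialized to 0, a first call to `P` returns immediately and leaves `value` at 1; a later `V` resets it to 0. The sketch keeps the busy-wait structure of the original and adds no queue of waiting processes.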



Three concurrent processes X, Y, and Z execute three different code segments that access and update certain shared variables. Process X executes the P operation (i.e., wait) on semaphores a, b and c; process Y executes the P operation on semaphores b, c and d; process Z executes the P operation on semaphores c, d, and a before entering the respective code segments. After completing the execution of its code segment, each process invokes the V operation (i.e., signal) on its three semaphores. All semaphores are binary semaphores initialized to one. Which one of the following represents a deadlock-free order of invoking the P operations by the processes?

A shared variable x, initialized to zero, is operated on by four concurrent processes W, X, Y, Z as follows. Each of the processes W and X reads x from memory, increments by one, stores it to memory, and then terminates. Each of the processes Y and Z reads x from memory, decrements by two, stores it to memory, and then terminates. Each process before reading x invokes the P operation (i.e., wait) on a counting semaphore S and invokes the V operation (i.e., signal) on the semaphore S after storing x to memory. Semaphore S is initialized to two. What is the maximum possible value of x after all processes complete execution?
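Questions of this kind can be checked by enumerating every interleaving the semaphore allows. The sketch below is one possible model, with assumptions stated plainly: each process is reduced to two atomic steps (P(S) together with the read, then the store together with V(S)), and the names `explore`, `max_final_x`, `delta` and `best` are hypothetical.

```c
#define NPROC 4

/* Change applied by W, X, Y, Z respectively. */
static const int delta[NPROC] = { +1, +1, -2, -2 };

static int best;   /* largest final x seen so far */

/* pc[p]: 0 = not started, 1 = has read x and holds S, 2 = finished. */
static void explore(int pc[NPROC], int local[NPROC], int x, int s) {
    int done = 1;
    for (int p = 0; p < NPROC; p++) {
        if (pc[p] == 2) continue;
        done = 0;
        if (pc[p] == 0) {
            if (s == 0) continue;            /* P(S) would block here */
            int saved = local[p];
            pc[p] = 1; local[p] = x;         /* P(S), then read x */
            explore(pc, local, x, s - 1);
            pc[p] = 0; local[p] = saved;     /* undo for the next branch */
        } else {
            pc[p] = 2;                       /* store x, then V(S) */
            explore(pc, local, local[p] + delta[p], s + 1);
            pc[p] = 1;
        }
    }
    if (done && x > best) best = x;
}

/* Maximum final value of x over all schedules, for a given initial S. */
int max_final_x(int initial_s) {
    int pc[NPROC] = { 0 }, local[NPROC] = { 0 };
    best = -1000;                            /* below any reachable value */
    explore(pc, local, 0, initial_s);
    return best;
}
```

Calling `max_final_x(2)` models the question as stated, while `max_final_x(1)` models full mutual exclusion, which makes it easy to see how much the larger initial value of S changes the outcome.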

A certain computation generates two arrays a and b such that a[i]=f(i) for 0 ≤ i < n and b[i]=g(a[i]) for 0 ≤ i < n. Suppose this computation is decomposed into two concurrent processes X and Y such that X computes the array a and Y computes the array b. The processes employ two binary semaphores R and S, both initialized to zero. The array a is shared by the two processes. The structures of the processes are shown below.

Process X:

private i;

for (i = 0; i < n; i++) {

a[i] = f(i);

ExitX(R, S);

}

Process Y:

private i;

for (i = 0; i < n; i++) {

EntryY(R, S);

b[i] = g(a[i]);

}

Q. Which one of the following represents the CORRECT implementations of ExitX and EntryY?


Fetch_And_Add(X,i) is an atomic Read-Modify-Write instruction that reads the value of memory location X, increments it by the value i, and returns the old value of X. It is used in the pseudocode shown below to implement a busy-wait lock. L is an unsigned integer shared variable initialized to 0. The value of 0 corresponds to lock being available, while any non-zero value corresponds to the lock being not available.

AcquireLock(L){

while (Fetch_And_Add(L,1))

L = 1;

}

ReleaseLock(L){

L = 0;

}

This implementation
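To reason about the pseudocode, it can help to replay one specific interleaving by hand. The sketch below does that in a single thread, so it is only a trace and not a concurrent test: `fetch_and_add` is a plain stand-in for the atomic instruction, and `replay_interleaving`, `t1` and `t2` are hypothetical names for the trace and the two threads' return values.

```c
/* Non-atomic, single-threaded stand-in for Fetch_And_Add(X, i). */
static unsigned fetch_and_add(unsigned *x, unsigned i) {
    unsigned old = *x;
    *x += i;
    return old;
}

/* Replays one chosen schedule of threads T1 and T2 and returns the value
 * of L at a moment when neither thread holds the lock. */
unsigned replay_interleaving(void) {
    unsigned L = 0;

    unsigned t1 = fetch_and_add(&L, 1);  /* T1 sees 0: T1 holds the lock */
    unsigned t2 = fetch_and_add(&L, 1);  /* T2 sees 1: T2 enters the loop */

    L = 0;                               /* T1 runs ReleaseLock */

    if (t1 == 0 && t2 != 0)
        L = 1;                           /* T2 now runs its loop body */

    return L;                            /* lock is free, yet L is non-zero */
}
```

In this schedule T2 was preempted between its Fetch_And_Add and the assignment `L = 1`, which is the window the question invites the reader to examine.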

A thread is usually defined as a "light weight process" because an operating system (OS) maintains smaller data structures for a thread than for a process. In relation to this, which of the following is TRUE?

Consider the methods used by processes P1 and P2 for accessing their critical sections whenever needed, as given below. The initial values of shared boolean variables S1 and S2 are randomly assigned.

Method Used by P1

while (S1 == S2) ;

Critical Section

S1 = S2;

Method Used by P2

while (S1 != S2) ;

Critical Section

S2 = not (S1);

Q. Which one of the following statements describes the properties achieved?

The enter_CS() and leave_CS() functions to implement critical section of a process are realized using test-and-set instruction as follows:

void enter_CS(X)

{

while test-and-set(X) ;

}

void leave_CS(X)

{

X = 0;

}

Q. In the above solution, X is a memory location associated with the CS and is initialized to 0. Now consider the following statements: I. The above solution to the CS problem is deadlock-free. II. The solution is starvation-free. III. The processes enter the CS in FIFO order. IV. More than one process can enter the CS at the same time. Which of the above statements is TRUE?

Consider a computer C1 that has n CPUs and k processes. Which of the following statements are false?

The P and V operations on counting semaphores, where s is a counting semaphore, are defined as follows:

P(s) : s = s - 1;

if (s < 0) then wait;

V(s) : s = s + 1;

if (s <= 0) then wakeup a process waiting on s;

Assume that Pb and Vb, the wait and signal operations on binary semaphores, are provided. Two binary semaphores Xb and Yb are used to implement the semaphore operations P(s) and V(s) as follows:

P(s) : Pb(Xb);

s = s - 1;

if (s < 0) {

Vb(Xb) ;

Pb(Yb) ;

}

else Vb(Xb);

V(s) : Pb(Xb) ;

s = s + 1;

if (s <= 0) Vb(Yb) ;

Vb(Xb) ;

The initial values of Xb and Yb are respectively

A process executes the following code

for (i = 0; i < n; i++) fork();

The total number of child processes created is
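The count can be worked out without actually forking by tracking how many processes are alive after each iteration. The helper below is a small sketch of that arithmetic; `count_children` is a hypothetical name, and it assumes every `fork()` call succeeds.

```c
/* Number of child processes created by: for (i = 0; i < n; i++) fork();
 * Assumes no fork() call fails and n is small enough not to overflow. */
unsigned long count_children(unsigned n) {
    unsigned long processes = 1;         /* the original process */
    for (unsigned i = 0; i < n; i++)
        processes *= 2;                  /* every live process forks once */
    return processes - 1;                /* exclude the original process */
}
```

Each iteration doubles the number of live processes because parent and child both continue the loop from the next value of `i`.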

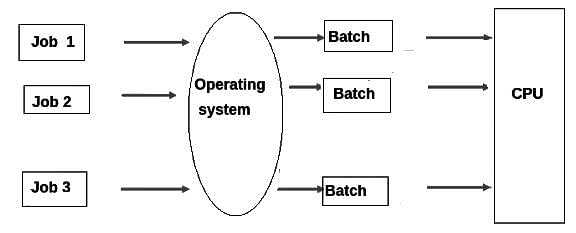

Which of the following types of operating systems is non-interactive?

Consider the following statements about user-level threads and kernel-level threads. Which one of the following statements is FALSE?

The program in the operating system that does processor management is called _______.

Two processes, P1 and P2, need to access a critical section of code. Consider the following synchronization construct used by the processes. Here, wants1 and wants2 are shared variables, which are initialized to false. Which one of the following statements is TRUE about the construct below?

/* P1 */

while (true) {

wants1 = true;

while (wants2 == true);

/* Critical Section */

wants1 = false;

}

/* Remainder section */

/* P2 */

while (true) {

wants2 = true;

while (wants1 == true);

/* Critical Section */

wants2 = false;

}

/* Remainder section */
