Difference between Multiprogramming, Multitasking, Multithreading & Multiprocessing


Modern operating systems provide different forms of concurrency and parallelism to improve resource utilisation and responsiveness. The following sections define and compare four commonly used terms in operating systems: multiprogramming, multiprocessing, multitasking and multithreading. Each term is explained with how it works, examples, advantages and typical use-cases. Keywords used in this chapter include process, thread, CPU, context switch, time-sharing, parallelism and concurrency.

Multiprogramming

Definition: Multiprogramming is the technique of keeping several programs (jobs or processes) in main memory so that the CPU always has one to execute. The operating system arranges to execute parts of one program, then parts of another, so that the CPU is rarely idle.

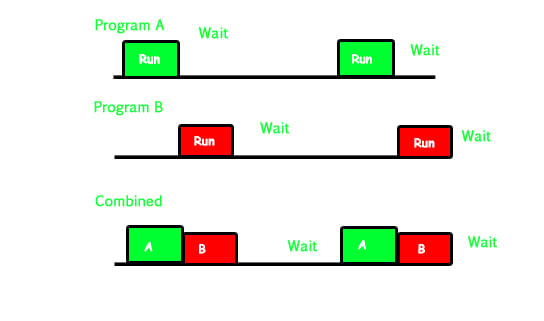

How it works: When more jobs are waiting for execution than main memory can hold, the OS keeps them in a job pool on disk. The OS (its job scheduler) selects jobs from the pool and loads them into memory, and the CPU scheduler gives the CPU to one of them. If the running job performs I/O or is otherwise blocked, the OS switches the CPU to another ready job. Switching the CPU from one job to another requires saving the state of the current job and restoring the state of the next one; this operation is called a context switch.

Non-multiprogrammed system (contrast):

- When a running job performs I/O, the CPU sits idle until that I/O completes; other ready jobs must wait even though the CPU is free.

- This leads to poor CPU utilisation and long waiting times for other jobs.

Multiprogrammed system (behaviour):

- As soon as a job becomes blocked (for example, waiting for I/O), the OS selects another job from the job pool and gives it the CPU.

- The blocked job's I/O proceeds on the device while the CPU executes other jobs, so CPU idle time is minimised.

- The goal of multiprogramming is to keep the CPU as busy as possible when there are ready processes.

Example: Program A runs and then enters the waiting state for an I/O operation; meanwhile the OS gives the CPU to Program B, which is already in memory, so CPU time is not wasted.
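The effect on CPU utilisation can be sketched with a small simulation. This is a simplified model, not how a real OS is implemented: each job is a hypothetical list of alternating CPU and I/O bursts (in arbitrary time units), every job is assumed to have its own I/O device so that I/O of different jobs can overlap, and context-switch cost is ignored. In the multiprogrammed case a job keeps the CPU until it blocks for I/O, at which point the next ready job is dispatched.

```python
from collections import deque

def simulate(jobs, multiprogrammed):
    """Return (total_time, cpu_busy_time) for running every job to completion.

    Each job is a list of burst lengths alternating CPU and I/O, starting with
    CPU: [3, 4, 2] means 3 units of CPU, 4 units of I/O, then 2 units of CPU.
    Assumption: I/O bursts of different jobs can overlap (separate devices).
    """
    if not multiprogrammed:
        # One job at a time: the CPU sits idle during every I/O burst.
        total = sum(sum(job) for job in jobs)
        busy = sum(sum(job[0::2]) for job in jobs)   # even indices are CPU bursts
        return total, busy

    burst = [0] * len(jobs)              # index of each job's current burst
    left = [job[0] for job in jobs]      # time remaining in the current burst
    ready = deque(range(len(jobs)))      # every job starts with a CPU burst
    in_io = set()                        # jobs currently blocked on I/O
    running = None                       # job that owns the CPU, if any
    done = time = busy = 0

    while done < len(jobs):
        if running is None and ready:
            running = ready.popleft()    # dispatch the next ready job
        if running is not None:
            busy += 1
            left[running] -= 1
        for j in in_io:                  # I/O proceeds while the CPU runs others
            left[j] -= 1
        time += 1

        # Jobs whose current burst has just finished move to their next burst.
        finished = [j for j in in_io if left[j] == 0]
        if running is not None and left[running] == 0:
            finished.append(running)
            running = None               # the CPU is free for another job
        for j in finished:
            in_io.discard(j)
            burst[j] += 1
            if burst[j] == len(jobs[j]):
                done += 1                # no bursts left: job complete
            elif burst[j] % 2 == 1:
                left[j] = jobs[j][burst[j]]
                in_io.add(j)             # odd index: job blocks for I/O
            else:
                left[j] = jobs[j][burst[j]]
                ready.append(j)          # even index: ready for the CPU again
    return time, busy

jobs = [[3, 4, 2], [2, 3, 2]]   # job 1: CPU 3, I/O 4, CPU 2; job 2: CPU 2, I/O 3, CPU 2
for mode in (False, True):
    total, busy = simulate(jobs, multiprogrammed=mode)
    print(f"multiprogrammed={mode}: {total} time units, CPU busy {busy / total:.0%}")
```

With these two jobs the CPU does the same 9 units of work either way, but the one-job-at-a-time run takes 16 units (about 56% utilisation) while the multiprogrammed run takes 11 (about 82%), because job 2 uses the CPU while job 1 waits for its I/O.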

Advantages:

- Higher CPU utilisation compared to non-multiprogrammed systems.

- Higher throughput: more jobs complete in a given amount of elapsed time.

Limitations:

- Requires efficient scheduling and context switching, which add overhead.

- Memory must be managed so multiple jobs can reside in main memory without conflict.

Multiprocessing

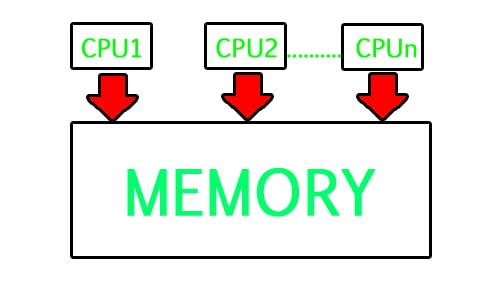

Definition: Multiprocessing refers to a computer system with two or more CPUs (processors) that can execute multiple processes simultaneously. It is a hardware capability: the system has more than one processing unit.

How it works: Multiple processors share the system bus, memory and some peripherals. With two or more processors available, independent processes can run on different processors at the same time; this is true parallel execution (not just the illusion of parallelism).

Working example:

- If processes P1, P2, P3 and P4 are ready, a multi-processor system can execute several of them simultaneously by assigning each to a different CPU.

- A dual-core processor can run two processes in parallel, and a quad-core processor can run four (subject to other system constraints such as shared memory bandwidth).
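Software has to be written to take advantage of the extra processors. A minimal Python sketch: `count_primes` is a hypothetical CPU-bound job, and `concurrent.futures.ProcessPoolExecutor` from the standard library runs each call in a separate worker process. Because the workers are separate processes, the OS is free to schedule them on different cores at the same time; whether they actually run in parallel depends on how many cores are available and what else is running. Processes are used here rather than threads because, in standard CPython builds, the global interpreter lock prevents threads from executing Python bytecode in parallel.

```python
import os
from concurrent.futures import ProcessPoolExecutor

def count_primes(limit):
    """Hypothetical CPU-bound job: count primes below `limit` by trial division."""
    count = 0
    for n in range(2, limit):
        if all(n % d for d in range(2, int(n ** 0.5) + 1)):
            count += 1
    return count

def run_in_parallel(limits):
    # One worker process per available core; the OS may place each worker on a
    # different CPU, so independent jobs can genuinely execute at the same time.
    with ProcessPoolExecutor(max_workers=os.cpu_count()) as pool:
        return list(pool.map(count_primes, limits))

if __name__ == "__main__":
    # Four independent jobs, playing the role of P1..P4.
    limits = [20_000, 30_000, 40_000, 50_000]
    print("cores:", os.cpu_count())
    print(run_in_parallel(limits))
```

The `if __name__ == "__main__":` guard matters on platforms where worker processes are started by re-importing the main module (the "spawn" start method, the default on Windows and macOS).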

Types (brief):

- Symmetric Multiprocessing (SMP): All processors are peers; any processor can run any process and access all memory and I/O devices.

- Asymmetric Multiprocessing (AMP): Processors may have specialised roles (for example a master handles scheduling), though modern systems usually use SMP.

Why use multiprocessing:

- To complete more work in less time by true parallel execution.

- Improved throughput and responsiveness for compute-intensive workloads.

- Increased reliability: if one processor fails, others can continue (system slows but does not necessarily halt).

Notes: Multiprocessing refers to hardware (multiple CPU units). Software (the operating system and applications) must be written to exploit multiple processors effectively.

Difference between Multiprogramming and Multiprocessing

- Multiprogramming is a software/OS technique to keep the CPU busy by switching between several programs on a single processor.

- Multiprocessing is a hardware property: multiple CPUs allow true parallel execution of processes.

- Multiprogramming achieves concurrency by context switching on one CPU; multiprocessing achieves parallelism by executing processes on different CPUs simultaneously.

- A system can be both multiprogrammed and multiprocessor: several programs may be resident in memory and the OS may schedule them across multiple CPUs.

Multitasking

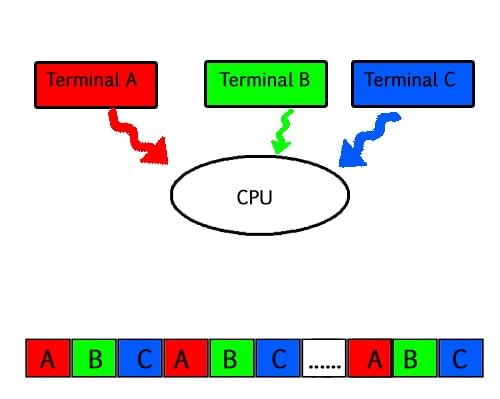

Definition: Multitasking is the capability of an operating system to allow multiple tasks (programs, processes, or threads) to make progress during the same period of time. In single-CPU systems this is achieved by time-sharing; in multi-CPU systems this can be combined with parallelism.

How it works: Multitasking is typically implemented by time-sharing. Each process gets a small time slice (time quantum) during which it executes. After its quantum expires, the OS performs a context switch and gives the CPU to another ready process. To users this appears as if multiple tasks are running simultaneously.

Key concepts:

- Time quantum (time slice): The fixed short time a process is allowed to run before being preempted.

- Preemptive multitasking: The OS forcibly takes the CPU away from a running process after its quantum expires (common in modern OSes).

- Non-preemptive (cooperative) multitasking: A process yields control voluntarily; less common in general-purpose OSes because a misbehaving process can block others.

Example: While a user edits a document, the same system plays music and fetches web pages. Each application receives CPU time in quick succession, giving the illusion of simultaneous execution.
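Preemptive time-sharing is commonly implemented with round-robin scheduling. The Python sketch below simulates it under simplifying assumptions: all processes arrive at time 0, the burst lengths are made-up numbers for the three applications in the example, and context-switch cost is ignored. A process that still needs CPU time when its quantum expires is preempted and placed at the back of the ready queue.

```python
from collections import deque

def round_robin(bursts, quantum):
    """Simulate round-robin scheduling for processes that all arrive at time 0.

    bursts maps each process name to the total CPU time it needs.
    Returns (timeline, completion): timeline lists (name, start, end) slices
    in execution order; completion maps each name to its finishing time.
    """
    remaining = dict(bursts)
    ready = deque(bursts)                      # ready queue, in arrival order
    time = 0
    timeline, completion = [], {}
    while ready:
        name = ready.popleft()
        run = min(quantum, remaining[name])    # run for at most one quantum
        timeline.append((name, time, time + run))
        time += run
        remaining[name] -= run
        if remaining[name] > 0:
            ready.append(name)                 # quantum expired: preempt, requeue
        else:
            completion[name] = time            # process finished
    return timeline, completion

timeline, completion = round_robin({"editor": 3, "music": 5, "browser": 2}, quantum=2)
for name, start, end in timeline:
    print(f"{start:>2}-{end:<2} {name}")
print(completion)
```

With a quantum of 2, the three applications take turns in 2-unit slices; the browser finishes at time 6, the editor at 7 and the music player at 10.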

Multitasking requirements:

- Multiprogramming (multiple ready programs in memory) and a scheduler to allocate time slices.

- Fast context switching to make the illusion of simultaneity convincing to users.

Advantages:

- Good responsiveness for interactive systems.

- Fair sharing of CPU among processes.

Limitations:

- Context switching overhead increases with the number of tasks and the frequency of switching.

- If the time quantum is too short, switching overhead dominates; if it is too long, responsiveness suffers.
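The quantum trade-off can be made concrete with a deliberately simple model (an assumption for illustration, not a measurement): every process always uses its full quantum q, every context switch costs a fixed time s, and n processes share one CPU. Then a fraction s / (q + s) of CPU time goes to switching, and a process may wait up to (n - 1)(q + s) before it runs again.

```python
def rr_costs(quantum_ms, switch_ms, n_processes):
    """Simplified round-robin cost model.

    Assumes each process always uses its full quantum and each context switch
    costs a fixed switch_ms. Returns (overhead_fraction, max_wait_ms).
    """
    cycle = quantum_ms + switch_ms             # one time slice plus one switch
    overhead = switch_ms / cycle               # share of CPU time lost to switching
    max_wait = (n_processes - 1) * cycle       # wait while every other process runs
    return overhead, max_wait

# Hypothetical switch cost of 0.1 ms with 10 ready processes.
for q in (1, 10, 100):
    overhead, wait = rr_costs(q, 0.1, 10)
    print(f"q={q:>3} ms  overhead={overhead:.1%}  max wait={wait:.1f} ms")
```

Under these assumptions a 1 ms quantum loses about 9% of CPU time to switching but keeps the worst-case wait near 10 ms, while a 100 ms quantum loses only about 0.1% but lets a process wait roughly 0.9 s.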

Multithreading

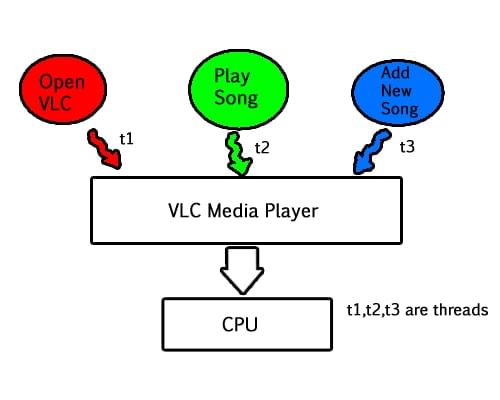

Definition: A thread is the smallest sequence of programmed instructions that can be managed independently by a scheduler. Multithreading is the ability of a single process to contain and run multiple threads concurrently within the same process address space.

How it works: Threads inside the same process share the process's code, data and open files, but each thread has its own program counter, stack and CPU registers. Multithreading allows a process to perform multiple activities concurrently without creating separate processes for each activity.
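A minimal Python sketch of threads sharing one address space, using the standard `threading` module (the function names are illustrative). All threads write into the same `results` list, which belongs to the process, while each thread's `total` is a local variable on that thread's own stack. Each thread writes only to its own slot, so no locking is needed here. In standard CPython builds the global interpreter lock stops these threads from running Python code in parallel, so this illustrates shared versus private state rather than a speed-up.

```python
import threading

def partial_sum(numbers, start, end, results, slot):
    # `total` is a local variable: every thread has its own copy on its own stack.
    total = 0
    for x in numbers[start:end]:
        total += x
    # `results` is one list shared by all threads of the process; each thread
    # writes only its own slot, so no two threads touch the same element.
    results[slot] = total

def threaded_sum(numbers, n_threads=4):
    results = [0] * n_threads
    chunk = (len(numbers) + n_threads - 1) // n_threads   # ceiling division
    threads = [
        threading.Thread(target=partial_sum,
                         args=(numbers, i * chunk, (i + 1) * chunk, results, i))
        for i in range(n_threads)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()            # wait for every thread before reading shared results
    return sum(results)

print(threaded_sum(list(range(1, 101))))   # prints 5050
```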

Typical examples:

- A web server: one thread listens for new client connections while each client request is handled by a separate thread. This allows the server to handle many requests concurrently.

- A media player (e.g. VLC): one thread handles user interface events, another thread decodes audio, and another reads from the disk or network. The UI remains responsive while decoding proceeds.
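The thread-per-request server design can be sketched with Python's standard `socketserver` module, whose `ThreadingTCPServer` starts a new thread for each client connection. This is a toy line-based service rather than real HTTP: the hypothetical `UpperHandler` reads one line and sends it back in upper case. The listening loop runs in one thread while each connection is handled in another.

```python
import socket
import socketserver
import threading

class UpperHandler(socketserver.StreamRequestHandler):
    """Handles one client connection; ThreadingTCPServer runs it in a new thread."""

    def handle(self):
        line = self.rfile.readline()        # read one request line from the client
        self.wfile.write(line.upper())      # reply with the same line in upper case

def start_server():
    # Port 0 asks the OS for any free port; the accept loop gets its own thread.
    server = socketserver.ThreadingTCPServer(("127.0.0.1", 0), UpperHandler)
    server.daemon_threads = True            # per-client threads will not block exit
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server

def ask(server, text):
    """Connect as a client, send one line and return the server's reply."""
    host, port = server.server_address
    with socket.create_connection((host, port)) as sock:
        sock.sendall(text.encode() + b"\n")
        with sock.makefile("rb") as reply:
            return reply.readline().decode().rstrip("\n")

if __name__ == "__main__":
    server = start_server()
    print(ask(server, "hello"))             # prints HELLO
    server.shutdown()
    server.server_close()
```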

User-level vs kernel-level threads (brief):

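- User-level threads: Managed by a user-space thread library; switching between them does not require kernel involvement, so it is fast. However, in the common many-to-one model the kernel sees only one schedulable entity for the process, so if one user thread makes a blocking system call, all user threads in that process block, and they cannot run in parallel on multiple CPUs.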

- Kernel-level threads: Managed by the kernel; each thread can be scheduled independently by the OS, enabling true concurrent execution on multiple CPUs but with higher switching overhead.
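To make the idea of user-level threads concrete, here is a sketch that uses Python generators as stand-ins for threads, switched by a tiny scheduler written entirely in user code (the names are hypothetical). Switching is just resuming a generator, with no system call, but the tasks are cooperative: each must `yield` to let the others run. If one task made a blocking call such as `time.sleep`, the whole scheduler, and every task in it, would stall, which mirrors the blocking problem described above for user-level threads.

```python
from collections import deque

def task(name, steps, trace):
    """A hypothetical user-level 'thread' that records each step it runs."""
    for i in range(steps):
        trace.append(f"{name}{i}")
        yield                        # voluntarily hand control back to the scheduler

def run_user_threads(tasks):
    """Round-robin scheduler running entirely in user space."""
    ready = deque(tasks)
    while ready:
        current = ready.popleft()
        try:
            next(current)            # resume the task until its next `yield`
        except StopIteration:
            continue                 # task finished; drop it
        ready.append(current)        # still alive: back of the ready queue

trace = []
run_user_threads([task("A", 2, trace), task("B", 3, trace)])
print(trace)                         # ['A0', 'B0', 'A1', 'B1', 'B2']
```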

Concurrency and parallelism:

- Concurrency: Multiple threads make progress in overlapping time intervals (may be on a single CPU using time-sharing).

- Parallelism: Multiple threads execute at the same time on different CPUs (requires multiprocessing hardware).

Synchronization issues:

- Because threads share memory, access to shared data must be controlled to avoid race conditions. Common synchronization mechanisms include mutexes (locks), semaphores and condition variables.

- The critical section is the part of code that accesses shared resources and must be protected to preserve correctness.
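A minimal sketch of protecting a critical section with a mutex, using `threading.Lock` from Python's standard library. `self.value += 1` is a read-modify-write: without the lock, two threads could read the same old value and one of the updates would be lost. Whether such a race actually shows up in a given run depends on the interpreter and on timing, which is what makes these bugs subtle. With the lock held around the update, the final count always equals the number of increments.

```python
import threading

class Counter:
    """A counter shared by several threads."""

    def __init__(self):
        self.value = 0
        self._lock = threading.Lock()    # mutex guarding `value`

    def increment(self):
        with self._lock:                 # enter the critical section
            self.value += 1              # read, add, write back: must not interleave

def run_threads(counter, n_threads=4, per_thread=10_000):
    def work():
        for _ in range(per_thread):
            counter.increment()

    threads = [threading.Thread(target=work) for _ in range(n_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()                         # wait until every thread has finished
    return counter.value

print(run_threads(Counter()))            # prints 40000 (4 threads x 10,000)
```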

Advantages of multithreading:

- Improved responsiveness: if one thread blocks, other threads in the same process can continue.

- Lower overhead than multiple processes: threads share resources so creating and switching threads is usually cheaper than processes.

- Better utilisation of multiprocessor systems when combined with multiprocessing.

Limitations and care points:

- Programming with threads requires careful design to avoid deadlocks, race conditions and priority inversion.

- Shared memory programming can introduce subtle bugs if synchronization is incorrect.

Comparison Summary

- Multiprogramming: Software technique to keep a single CPU busy by holding multiple programs in memory and switching among them (context switching). Focus: CPU utilisation.

- Multitasking: Time-sharing extension of multiprogramming aimed at responsiveness and interactive use. Focus: short time slices and preemptive scheduling to give the appearance of parallelism.

- Multithreading: Multiple threads within the same process that share resources but run independently; useful for decomposing tasks and improving responsiveness and throughput.

- Multiprocessing: A hardware feature in which multiple CPUs or cores enable true parallel execution of processes and/or threads. Focus: increased raw computational throughput and reliability.

- A system can combine these concepts: for example, a multiprocessor machine running a multitasking OS with multiprogrammed memory management and applications that use multiple threads.

Practical Applications and When to Use Each

- Use multiprogramming techniques when CPU utilisation needs to be maximised on single-CPU systems or when many jobs must be kept ready for execution.

- Use multitasking (time-sharing) for interactive systems such as desktop operating systems where responsiveness is important.

- Use multithreading inside applications that have multiple independent or overlapping activities (e.g. servers, GUIs, media players) to keep programs responsive and to exploit multiple cores.

- Use multiprocessing in compute-intensive environments (scientific computing, servers, databases) where parallel execution across cores/processors improves throughput and performance.

Conclusion

All four concepts (multiprogramming, multitasking, multithreading and multiprocessing) help systems do more work in less time or give users a more responsive experience. They operate at different levels (software scheduling versus hardware resources) and can be combined: an OS can be multiprogrammed and multitasking on a multiprocessing machine while applications use multithreading to exploit the available processors. Understanding the distinctions and interactions among these approaches is essential for designing and optimising operating systems and applications.

