Hardware & Software | Cyber Olympiad for Class 9

A computer is an electronic device that can perform activities that involve mathematical, logical, and graphical manipulations. Generally, the term computer is used to describe a collection of devices that function together as a system. It performs the following three operations in sequence.

- Receives data and instructions from the input device.

- Processes the data as per the given instructions.

- Provides the result as output in a desired form on the output device.

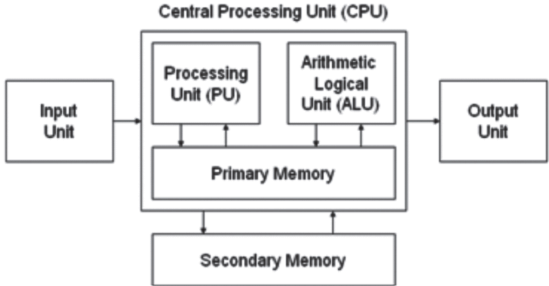

Block Diagram of a Computer
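The three operations above can be sketched in a few lines of Python. This is only an illustration: the function names `read_input`, `process`, and `write_output` are made up for this example, and a plain text string stands in for a real input device.

```python
def read_input(raw_text):
    """Input step: turn raw text (as an input device would supply it) into numbers."""
    return [float(token) for token in raw_text.split()]

def process(numbers):
    """Processing step: follow the instruction 'compute the average'."""
    return sum(numbers) / len(numbers)

def write_output(result):
    """Output step: present the result in a readable form."""
    return f"Average: {result:.2f}"

raw = "10 20 30 40"           # stands in for data typed on a keyboard
data = read_input(raw)        # 1. receive data
average = process(data)       # 2. process the data as instructed
print(write_output(average))  # 3. provide the result -> Average: 25.00
```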

Modern personal computers usually contain the following components:

- Input Unit: Reads information from input media and enters it into the computer.

- Output Unit: Displays information to the user.

- Central Processing Unit (CPU): It reads and executes the instructions of a computer program, which is why it is considered the brain of a computer. The CPU consists of a Storage or Memory Unit, an Arithmetic Logic Unit (ALU), and a Control Unit (CU).

(a) Storage or Memory Unit: It is also known as primary storage or main memory. It temporarily stores data, program instructions, intermediate results, and final output before the output is sent to an appropriate output device. It consists of thousands of cells called storage locations. Each cell stores binary digits, called bits, and combinations of these bits represent instructions and data.
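As a rough illustration of how combinations of bits can represent data, the sketch below prints the bit pattern of a number and of each character in a short text. The helper names `to_bits` and `text_to_bits` are hypothetical, and the 8-bit ASCII encoding is an assumption chosen for simplicity; real memory units may organise cells differently.

```python
def to_bits(value, width=8):
    """Return the binary digits of a non-negative integer, padded to `width` bits."""
    return format(value, f"0{width}b")

def text_to_bits(text):
    """Encode each character as the 8-bit pattern of its ASCII code."""
    return [to_bits(byte) for byte in text.encode("ascii")]

print(to_bits(5))          # 00000101
print(text_to_bits("Hi"))  # ['01001000', '01101001']
```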

(b) Arithmetic Logic Unit (ALU): The arithmetic logic unit, or ALU, is the part of a CPU that carries out arithmetic and logic operations when instructions are given. In some processors the ALU is divided into two units, an arithmetic unit (AU) and a logic unit (LU), and some processors contain more than one AU. Typically, the ALU has direct access to the processor controller, main memory, and input/output devices.

Inputs and outputs flow along an electronic path called a bus. The input is a set of instructions that contains an operation code, which tells the ALU what task to carry out. The output is a result that is shown on or placed in an output device, indicating that the operation was performed. The ALU is the unit where all arithmetic operations (addition, subtraction, etc.) and logical operations (comparisons whose answer is one of two values, such as true or false) are performed. Once data are fed into the main memory from the input devices, they are held there and transferred as needed to the ALU, where processing takes place.

No processing occurs in primary storage. Intermediate results generated in the ALU are temporarily placed in memory until needed later. Data may move from primary memory to the ALU and back to storage many times before the processing is finished.
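The idea of an operation code selecting the task can be sketched as a small lookup table. The codes `ADD`, `SUB`, `MUL`, `EQ`, and `LT` below are hypothetical; real processors define their own numeric encodings, and this is not a model of any particular ALU.

```python
import operator

# Hypothetical operation codes; real processors define their own encodings.
OPERATIONS = {
    "ADD": operator.add,   # arithmetic
    "SUB": operator.sub,
    "MUL": operator.mul,
    "EQ": operator.eq,     # logical comparisons give True or False
    "LT": operator.lt,
}

def alu(opcode, a, b):
    """Carry out the operation named by `opcode` on operands a and b."""
    if opcode not in OPERATIONS:
        raise ValueError(f"unknown operation code: {opcode}")
    return OPERATIONS[opcode](a, b)

print(alu("ADD", 7, 5))  # 12
print(alu("LT", 7, 5))   # False
```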

(c) Control Unit: It acts as a central nervous system and ensures that the information is stored correctly and the program instructions are followed in proper sequence. The data are selected from the memory as and when necessary. It also coordinates all the input and output devices of a system. A control unit tells the computer’s logic unit, memory, and both input and output devices how to respond to instructions received from a program. Examples of devices that utilize control units include Central Processing Units (CPUs) and Graphics Processing Units (GPUs). A control unit receives input information from the input devices and converts it into control signals, which are then sent to the central processor. The computer’s processor then tells the attached output device how to respond.

Some of the functions carried out by the CU:

- Interprets instructions given as input

- Controls and executes instructions sequentially

- Regulates and controls processor timing

- Sends and receives control signals from other computer devices

- Carries out multiple tasks, like fetching, decoding, executing, handling, and storing
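The fetch, decode, and execute steps listed above can be sketched with a toy machine. Everything here is an assumption made for illustration: the instruction names `LOAD`, `ADD`, `STORE`, and `HALT`, the single accumulator, and the use of named storage locations do not describe any real processor.

```python
def run(program, data):
    """Run a toy program: fetch, decode, and execute one instruction at a time."""
    memory = dict(data)   # named storage locations (copied so the caller's data is untouched)
    accumulator = 0       # working register that holds intermediate results
    pc = 0                # program counter: index of the next instruction

    while pc < len(program):
        instruction = program[pc]        # fetch
        opcode, operand = instruction    # decode
        pc += 1
        if opcode == "LOAD":             # execute
            accumulator = memory[operand]
        elif opcode == "ADD":
            accumulator += memory[operand]
        elif opcode == "STORE":
            memory[operand] = accumulator
        elif opcode == "HALT":
            break
        else:
            raise ValueError(f"unknown opcode: {opcode}")
    return memory

program = [("LOAD", "x"), ("ADD", "y"), ("STORE", "total"), ("HALT", None)]
print(run(program, {"x": 2, "y": 3}))  # {'x': 2, 'y': 3, 'total': 5}
```

The loop plays the role of the control unit: it decides which instruction comes next and routes data between memory and the accumulator, while the arithmetic itself is the ALU's job.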

(d) Secondary Memory: Secondary memory is also known as secondary storage. It is non-volatile, meaning that its data is not lost even when the power supply to the computer system is turned off. Data in secondary memory cannot be processed directly by the CPU; it must first be copied into main memory. It allows a user to store data that can be quickly and easily retrieved or transported. Some examples of secondary memory devices are magnetic disks such as hard drives and floppy disks, optical disks such as CDs and DVDs, and magnetic tapes.

Classification of Computers

Computers can be classified in various ways depending upon their size, memory capacity, processing speed, etc. Here we are going to discuss the broadly accepted classification of a computer.

Characteristics of A Computer

- Speed: The internal processing speed of a computer is very high.

- Accuracy: Accuracy is one of the most important characteristics. A computer can do computation with a high degree of precision. It gives faulty results only when faulty instructions are given.

- Versatile: Computers are capable of performing almost any type of task.

- Storage: The computer can store a large amount of data for subsequent manipulation.

- Automation: Once the method or program is given to the computer, it performs the task without human intervention.

- Diligence: A computer works with the same speed, same accuracy, and same efficiency right throughout. It is ideal for repetitive jobs.

Classification on the basis of Function

Analog Computer: An analog computer processes analog data. Analog data is continuous and has an infinite variety of values. These data include temperature, pressure, speed, weight, voltage, depth, etc. Analog computers were the first computers to be developed, and they provided the basis for the development of digital computers. Analog computers are widely used for specialized engineering and scientific applications, especially for the calculation of analog quantities.

Digital Computer: Most of the computers available today are digital computers. A digital computer employs digits to represent any kind of data. Digital computers process information which is based on the presence or the absence of an electrical charge or binary digits 1 or 0. It can be used to interpret both numeric and alphanumeric data. It can perform arithmetic operations like addition, subtraction, multiplication, division, and also logical operations. Some examples of digital computers are accounting machines and calculators. The results of digital computers are more accurate than those of analog computers.

Hybrid Computers: These computers combine features of both analog and digital computers. One example is an STD/PCO phone, where the conversation is carried as an analog signal while the call charges and pulse rate are measured in the form of digits. A system that measures heartbeat, such as an ECG system in a hospital ICU, is another example of a hybrid computer.

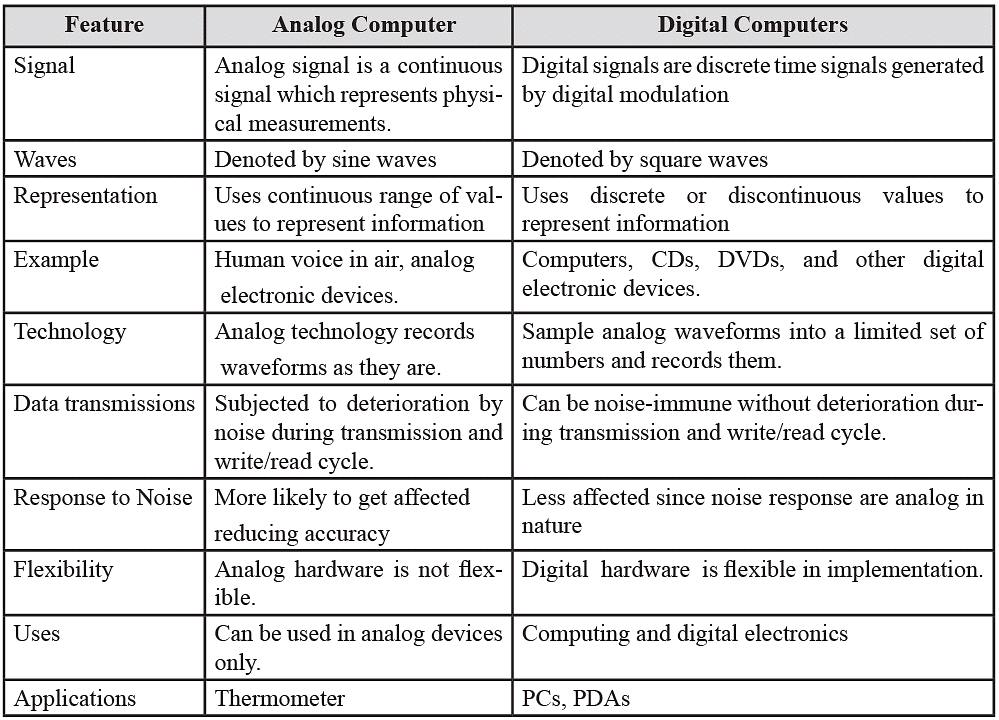

Difference between Analog and Digital Computer

Classification on the basis of Purpose

In a digital computer, classification can be done on the basis of purpose:

- General Purpose computer

- Special Purpose computer

General Purpose Computer

These computers are designed to perform a variety of jobs or applications and are less efficient than special-purpose computers. They are used in banking, sales analysis, PCs, etc.

Special Purpose Computer

These are designed to meet the needs of some special applications. They are designed to perform a single job. Therefore, they execute a task quickly and more efficiently. Programs and instructions are stored permanently in them. They are used in weapon design.

Classification on the basis of Sizes

1. Super Computer

These computers can handle the most complex scientific and statistical applications or programs. They can process trillions of instructions in a second. Their speed is measured in picoseconds. They are also used for weather forecasting worldwide.

Key features are:

- High technology

- High capacity memory

- Fast processing

- Highly sophisticated technology

- Cost varies from 1 million to 5 million

Example: PARAM

Disadvantages are:

- Operating a Supercomputer requires highly qualified staff.

- Experts are required for such computer engineering.

- These are sensitive to temperature, humidity, dust, etc.

- Nonportability and large size

2. Mainframe Computer

The CPU of the mainframe computer is connected to more than 100 terminals. It occupies approximately 1000 sq. feet of area. Insurance companies, banks, and airline and railway reservation systems use these computers. The most popular maker of these computers is IBM. Examples of mainframe computers are IBM 1401, ICL 2950/10.

Key features are:

- Smaller size than supercomputers

- Large memory capacity

- Allows networking of up to 100 terminals

- Cost varies from 5-20 lacs.

Examples: PDP-370, IBM 40

Disadvantages are:

- Experts and highly qualified professionals are required to operate it

- Sophisticated technology is required for manufacturing and assembling the computer

3. Mini Computer

Minicomputers are medium-sized computers, also known as mid-range computers. They are more reliable and faster than microcomputers. They come next in line after mainframes, offering less capacity and performance than a mainframe. They are mostly preferred by small businesses, colleges, etc.

Key features are:

- Higher processing speed than microcomputers but slower than supercomputers and mainframe computers

- Portable because of its smaller size

- Memory capacity RAM is up to 128 MB

- Secondary Memory stores 40 GB

- Costs around Rs 50 thousand to 90 thousand

Examples: PDP-11 and PDP-45.

Disadvantages are:

- Cannot be connected to all hardware devices

- Cannot execute all languages and software

4. Micro Computer

Microcomputers are small, single-user computers that contain a single processor and a few input/output devices. They are also known as ‘Personal Computers’ or PCs. These are the smallest computer systems on the basis of size. They are called micro because of the microprocessor they use to operate. Microcomputers can be grouped into five smaller groups. They are:

- Workstations

- Desktops

- Servers

- Laptops

- Notebooks

Key features are:

- Smaller than a mini-computer

- High-speed computer but slower than a mini-computer

- Costs around Rs. 30,000 to 60,000

- Portable

- RAM requires 64 MB to 128 MB

- Only a limited set of languages (FORTRAN, BASIC, COBOL, Pascal) can be executed

Examples: Uptron, HCL, PCL, Wipro, PCs, HP, PC-AT, PC-XT

5. Desktop Computers

These are also called home or briefcase computers. Desktop computers are used in the education system and small-scale industries.

Key features are:

- Portable

- High-speed processing; the processor ranges from the 80286 to the 80586

- Requires RAM ranging from 16 MB to 64 MB

- Internet facility for communication

- Costs around Rs 30,000 to Rs 60,000

Examples: PCL, Wipro, COMPAQ, HP, LEO, SAMSUNG, etc.

Disadvantages are: They execute only a limited set of software and languages, mostly related to Windows.

6. Pocket Computer

Key features are:

- Small in size

- Portable like a digital diary

- Requires RAM maximum up to 1GB

- Disk capacity is 80 GB

Disadvantages are: They work with only a limited range of software and hardware.

Computer Generations

A generation refers to the state of improvement in the development of a product. This term is also used in different advancements in computer technology. With the stages of each new generation, the circuitry has become smaller and more advanced than that of the previous generation. As a result speed, power, and memory of computers have proportionally increased.

The First Generation (1946-1958, The Vacuum Tube Years)

The first generation of computers was huge, slow, expensive, and often undependable. In 1946, two Americans, Presper Eckert and John Mauchly, built the ENIAC electronic computer, which used vacuum tubes. The first-generation computers used vacuum tubes for circuitry and magnetic drums for memory. The ENIAC used thousands of vacuum tubes, which took up a lot of space and gave off a great deal of heat, just like light bulbs do. The ENIAC led to other vacuum tube-type computers like the EDVAC (Electronic Discrete Variable Automatic Computer) and the UNIVAC (Universal Automatic Computer).

Features of the first generation:

- Use of vacuum tubes

- Big and clumsy.

- High electricity consumption

- Programming in machine language

- Large air conditioners (ACs) were needed

- A lot of electricity failures occurred

The Second Generation (1959-1964, The Era of the Transistor):

The transistor computer did not last as long as the vacuum tube computer, but it was no less important in the advancement of computer technology. In 1947, three scientists, John Bardeen, William Shockley, and Walter Brattain, working at AT&T's Bell Labs, invented what would replace the vacuum tube. This invention was the transistor, which functions like a vacuum tube and could be used to relay and switch electronic signals.

There were obvious differences between the transistor and the vacuum tube. The transistor was faster, more reliable, smaller, and much cheaper to build than a vacuum tube. One transistor was equivalent to 40 vacuum tubes. A transistor also produced far less heat than a vacuum tube.

Features of the second generation:

- Transistors were used

- Core Memory was developed

- Faster than First Generation computers

- First Operating System was developed.

- Programming was in Machine Language and Assembly Language.

- Magnetic tapes and discs were used. The magnetic core technology was used instead of the magnetic drum.

- Computers became smaller in size than the First Generation computers.

- Computers generated less heat and consumed less electricity.

The Third Generation (1965-1970, Integrated Circuits)

The integrated circuit (IC), sometimes referred to as a semiconductor chip, packs a huge number of transistors onto a single wafer of silicon. Robert Noyce of Fairchild Corporation and Jack Kilby of Texas Instruments independently discovered the amazing attributes of integrated circuits. Placing such large numbers of transistors on a single chip drastically increased the power of a single computer and lowered its cost considerably.

Since the invention of integrated circuits, the number of transistors that can be placed on a single chip has doubled roughly every two years, shrinking both the size and cost of computers and further enhancing their power. Instead of punched cards and printouts, keyboards and monitors were used to interact with the operating system. Most electronic devices today use some form of integrated circuit mounted on a printed circuit board; in a computer, the main board is called the motherboard.
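To get a feel for what doubling every two years means, the arithmetic can be sketched as follows. The function name `projected_transistors` and the starting figure of 1,000 transistors are hypothetical, and the steady two-year doubling is an idealisation rather than measured data.

```python
def projected_transistors(initial_count, years, doubling_period=2):
    """Project a transistor count that doubles every `doubling_period` years."""
    return initial_count * 2 ** (years / doubling_period)

# Hypothetical starting point of 1,000 transistors on a chip.
for years in (0, 2, 10, 20):
    print(years, round(projected_transistors(1_000, years)))
```

Twenty years at this rate means ten doublings, so the count grows by a factor of 2 to the power 10, which is 1,024.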

Features of third-generation computers:

- Integrated circuits were developed.

- Power consumption was low.

- SSI & MSI Technology was used.

- High-level languages were used.

The Fourth Generation (1971-Today, The Microprocessor)

This generation is characterized by the invention of the microprocessor (a single chip that could do all the processing of a full-scale computer). By putting millions of transistors onto one single chip, more calculations and faster speeds could be reached by computers. Ted Hoff, employed by Intel (Robert Noyce's new company), invented a chip the size of a pencil eraser that could do all the computing and logic work of the computer. The microprocessor was made to be used in calculators, not in computers. It led, however, to the invention of personal computers, or microcomputers.

Properties of Computers

- High speed: Computers can perform routine tasks at a greater speed than human beings. They can perform millions of calculations in seconds.

- Accuracy: Computers perform tasks with a high degree of accuracy. Errors arise only when the data or instructions they are given are faulty.

- Storage: Computers can store a large amount of information. Any item of data or any instruction stored in the memory can be retrieved by the computer at lightning speed.

- Automation: Computers can be instructed to perform complex tasks automatically (which increases productivity).

- Diligence: Computers can perform the same task repeatedly and with the same accuracy without getting tired.

- Versatility: Computers are flexible to perform both simple and complex tasks.

- Cost-effectiveness: Computers reduce the amount of paperwork and human effort, thereby reducing the cost.

Limitations of Computers

A computer can perform better than a human being in speed, memory, and accuracy, but it still has limitations.

Some limitations of a computer are as follows.

- Programmed by humans: Though the computer is programmed to work efficiently, accurately, and fast it is programmed by human beings to do so. Without a program, the computer is nothing. A computer only follows the instructions given by humans. If the instructions are not accurate, the working of the computer will not be accurate.

- Thinking: The computer cannot think on its own. The concept of artificial intelligence shows that the computer can think. But this concept is dependent on a set of instructions provided by human beings.

- Self Care: A computer cannot care for itself like a human. It is dependent on human beings for this purpose.

- Retrieval of memory: A computer can retrieve data very fast but this technique is linear. A human being does not follow this rule. A human mind can think randomly, which a computer machine cannot.

- Feelings: A computer cannot feel about something like a human can. It cannot parallel humans in respect of relations. Humans can feel, think, and care, but a computer cannot. A computer cannot take the place of human beings because a computer is always dependent on human beings.

Software

Computer components fall into two major areas, namely hardware and software. Hardware is the machine itself and its various individual pieces of equipment. It includes all mechanical, electronic, and magnetic devices such as monitors, printers, electronic circuits, and floppy and hard disks.

As we all know the computer cannot do anything without instructions from the user. In order to do any specific job, you have to give a set of instructions to the computer. This set of instructions is called a computer program. A collection of programs is called software which increases the capabilities of the hardware.

The software guides the computer at every step in carrying out a particular task. The process of software development is called programming. Software and hardware are complementary to each other. Both have to work together to produce meaningful results. Producing software is more difficult and expensive than making hardware.

Types of Software

Computer software is normally classified into two broad categories.

- Application Software

- System software

1. Application Software

It is a set of one or more programs that are designed for the user to carry out operations for a specific application. Application software cannot run on its own but depends on system software to execute commands.

Some examples of application software are the following:

- Payroll Software

- Student Record Software

- Inventory Management Software

- Income Tax Software

- Railways Reservation Software

- Microsoft Office Suite Software

- Microsoft Word

- Microsoft Excel

- Microsoft PowerPoint

In later modules, you will learn about MS WORD, Lotus 1-2-3, and dBase III Plus. All these are application software. Another example of application software is a programming language. Among the programming languages, COBOL (Common Business Oriented Language) is more suitable for business applications whereas FORTRAN (Formula Translation) is useful for scientific applications. We will discuss languages in the next section.

2. System Software

A set of programs has to be fed to the computer for the operation of the computer system as a whole. When you switch on the computer, the programs written in ROM are executed, which activate different units of your computer and make it ready for you to work on it. This set of programs can be called system software.

Therefore, the system software may be defined as a set of one or more programs designed to control the operation of the computer system. System software is a general program designed for performing tasks such as controlling all operations required to move data into and out of the computer.

It communicates with printers, card readers, disks, tapes, etc., and monitors the use of various hardware components like memory, CPU, etc. System software contains programs written in low-level languages which interact with the hardware at the most basic level. In addition, the system software is essential for the development of application software. System software allows application packages to be run on the computer with less time and effort. Remember that it is not possible to run application software without system software.

Features of application software are:

- Close to user

- Easy to design

- More interactive

- Slow in speed

- Written in a high-level language

- Easy to understand

- Easy to manipulate and use

The development of system software is a complex task and requires extensive knowledge of computer technology. Computer manufacturers build and supply system software with the computer system. DOS, UNIX, and WINDOWS are some of the widely used system software. Out of these, UNIX is a multi-user operating system whereas DOS and WINDOWS are PC-based. Without system software, it is impossible to operate a computer.

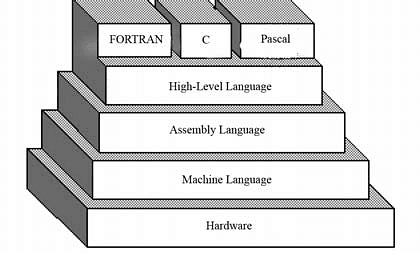

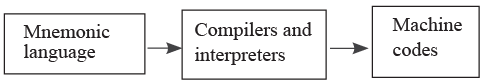

Language

A computer does not understand natural languages such as English or Hindi for the transfer of data and instructions. Therefore, programming languages have been specially developed so that you can pass your data and instructions to the computer to do a specific job. Names of some programming languages are FORTRAN, BASIC, COBOL, etc. Instructions or programs are written in a particular language based on the type of job. For example, for scientific applications, FORTRAN and C languages are used. On the other hand, COBOL is used for business applications.

Types of Language

There are two major types of programming languages:

1. Low-Level Languages

The term low level means closeness to the way in which the machine has been built. Low-level languages are machine-oriented and require extensive knowledge of computer hardware and its configuration.

Machine Language

Machine language is the lowest-level programming language. It is the language that computers understand directly, so it need not be converted to any other form. Machine language is in a format that can be easily processed by computers, but it is very difficult for a human being to understand since it contains only numbers. It consists of just two digits, 0 and 1, also known as binary digits. Programmers usually write code in a higher-level language and convert it to machine language using assemblers or compilers.

For example, a program instruction may look like this:

1011000111101

It is not easy to learn a low-level language because of its difficulty to understand, though computers understand this language very efficiently. It is considered to be the first generation language. It is also difficult to debug the program written in this language.

Advantages

The only advantage is that the program of machine language runs very fast because no translation program is required for the CPU.

Disadvantages

- It is very difficult to program in machine language. The programmer has to know the details of the hardware to write the program.

- The programmer has to remember many codes to write a program, which results in program errors.

- It is difficult to debug the program.

Assembly Language

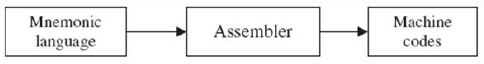

- A computer can handle both numbers and letters. Therefore, combinations of letters can be used in place of the numeric machine codes. Machine languages consist only of numbers and are almost impossible for programmers or users to read and write. This is where an assembly language comes into play.

- The assembly language has the same set of commands, but it allows programmers to replace numbers or digits with names and words. For example, the binary-code instruction that means ‘store the contents of the accumulator’ may be represented with the mnemonic STA (STore Accumulator).

- Machine language and assembly language developed for one computer system will not run on another. A separate assembly language has to be developed for another system. Programmers still use assembly language when speed is essential or when they need to perform an operation that is not possible in a high-level language.

- A set of symbols and letters forms the assembly language, and a translator program is required to translate the assembly language to machine language. This translator program is called an ‘assembler’. This language is considered to be a second-generation language.
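The translation from mnemonics to machine code can be sketched with a toy assembler. The 4-bit operation codes in `OPCODES`, the 8-bit word layout, and the function names are all invented for this illustration and do not belong to any real machine or assembler.

```python
# Hypothetical 4-bit operation codes for an imaginary machine.
OPCODES = {"LDA": "0001", "ADD": "0010", "STA": "0011", "HLT": "1111"}

def assemble_line(line):
    """Translate one assembly line, e.g. 'STA 9', into one 8-bit machine word."""
    parts = line.split()
    mnemonic = parts[0].upper()
    operand = int(parts[1]) if len(parts) > 1 else 0
    if mnemonic not in OPCODES:
        raise ValueError(f"unknown mnemonic: {mnemonic}")
    if not 0 <= operand <= 15:
        raise ValueError("operand must fit in 4 bits")
    return OPCODES[mnemonic] + format(operand, "04b")

def assemble(source):
    """Translate a whole program; each non-empty line yields exactly one word."""
    return [assemble_line(line) for line in source.splitlines() if line.strip()]

source = """
LDA 7
ADD 8
STA 9
HLT
"""
print(assemble(source))  # ['00010111', '00101000', '00111001', '11110000']
```

Each line of assembly produces exactly one machine word, which is the one-to-one relationship that keeps assembly language as efficient as machine language.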

Advantages

- The symbolic programming of assembly language is easier to understand and saves a lot of time and effort for the programmer.

- It is easy to correct errors and modify program instructions.

- Assembly language has the same efficiency of execution as machine-level language because there is a one-to-one translation between the assembly language program and its corresponding machine language program.

Disadvantages

One of the major disadvantages is that assembly language is machine-dependent. A program written for one computer might not run on other computers with different hardware configurations.

2. High-Level Languages

You know that assembly language and machine-level language require deep knowledge of computer hardware, whereas in high-level languages a programmer needs to know only the instructions in English words and the logic of the problem, irrespective of the type of computer being used. In computer science, a high-level programming language is a programming language with a strong resemblance to human language. Unlike machine language, it uses natural language elements, is easier to use, and may automate significant areas of computing systems, making the process of developing a program much simpler. Some examples include BASIC, FORTRAN, Java, C++, and Pascal.

High-level languages are simple languages that use English and mathematical symbols like +, -, %, / etc. for their program construction.

A high-level language needs to be converted to a machine language for the computer to understand. High-level languages are problem-oriented languages because the instructions are suitable for solving a particular problem. For example, COBOL (Common Business Oriented Language) is mostly suitable for business-oriented applications, which require very little processing and produce huge output. There are mathematically oriented languages like FORTRAN (Formula Translation) and BASIC (Beginner's All-purpose Symbolic Instruction Code) where very large processing is required.

Thus, a problem-oriented language is designed in such a way that its instruction may be written more like the language of the problem. For example, business persons use business terms and scientists use scientific terms in their respective languages.

Advantages

High-level languages have a major advantage over machine and assembly languages: they are easy to learn and use, because they are similar to the languages used in our day-to-day life.

Translator

It is a computer program that translates a program written in one programming language into an equivalent program in a different computer language, without losing the functionality of the program. These include translations between high-level, human-readable computer languages such as C++, Java, and COBOL.

Some computer translators include interpreters, compilers, and assemblers.

- If the translator translates a high-level language into another high-level language, it is called a translator or source-to-source compiler. Examples include Haxe, FORTRAN-to-Ada translators, PASCAL-to-C translators, and COBOL(DialectA)-to-COBOL(DialectB) translators.

- If the translator translates a high-level language into a lower-level language, it is called a compiler. Notice that every language can be either translated into a (Turing-complete) high level or assembly language.

- If the translator translates a high-level language into an intermediate code which will be immediately executed, it is called an interpreter.

- If the translator translates assembly language to machine code, it is called an assembler. Examples include MASM, TASM, and NASM.

Assembler

An assembler translates the symbolic codes of an assembly language program into machine language instructions. The translation is one-to-one: each symbolic instruction becomes one machine code instruction. Such languages are called low-level languages.

Compiler

Compilers are translators that translate all the instructions of a program into machine code, which can be used again and again. The program to be translated is called the source program, and the result of the translation is called the object code. The source program is the input to the compiler, and the object code is its output, which is saved on a secondary storage device. The compiler reads the entire program first and then generates the object code. The compiler also displays a list of errors and warnings for statements that violate the syntax rules of the language.

Interpreter

Interpreters also belong to the group of translators. An interpreter helps the user execute the source program, with a few differences as compared to compilers. The source program looks like English statements for both interpreters and compilers. The interpreter reads the program line by line, translating and executing each line before moving on to the next, whereas a compiler reads the entire program and then generates the object code. Because the interpreter executes the program directly from its source code and saves no object code, the source code must be supplied to the interpreter every time the program is run. A program can start running quickly because no separate compilation step is needed, but it usually runs more slowly than a compiled program because the translation is repeated on every run.
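The difference between the two approaches shows up clearly when a program contains a syntax error. The sketch below is only an illustration: Python's built-in `compile` and `exec` stand in for a real translator, and the function names `run_compiled` and `run_interpreted` and the helper `emit` are hypothetical.

```python
def run_compiled(source):
    """Compiler-style: translate the whole program first, then execute it."""
    output = []
    try:
        code = compile(source, "<program>", "exec")  # the whole program is read up front
    except SyntaxError as error:
        return output, f"syntax error on line {error.lineno}"
    exec(code, {"emit": output.append})
    return output, None

def run_interpreted(source):
    """Interpreter-style: translate and execute one line at a time."""
    output = []
    env = {"emit": output.append}
    for number, line in enumerate(source.splitlines(), start=1):
        try:
            exec(compile(line, "<line>", "exec"), env)
        except SyntaxError:
            return output, f"syntax error on line {number}"
    return output, None

program = "emit(1)\nemit(2)\nemit(3 +)\n"   # the third line is deliberately broken
print(run_compiled(program))     # ([], 'syntax error on line 3')
print(run_interpreted(program))  # ([1, 2], 'syntax error on line 3')
```

The compiler-style run reports the error before anything executes, while the interpreter-style run has already carried out the first two lines by the time it reaches the faulty one.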

Relationship between Hardware and Software

- Hardware and software must work together to make a computer produce a useful output.

- The software cannot be utilized without the hardware.

- Hardware cannot operate without software.

- Different software applications can be loaded on hardware to run different jobs.

- The software acts as an interface between the user and the hardware.
