Notes: Deadlock

Introduction of Deadlock in Operating System

A process in an operating system requests and uses resources in a cycle of actions:

- Request a resource

- Use the resource

- Release the resource

Deadlock is a situation in which a set of processes are blocked because each process is holding at least one resource and waiting to acquire a resource held by some other process. Once the involved processes are blocked in this way, none can proceed.

Consider the simple analogy of two trains travelling towards each other on a single-track section. If each train occupies one end of the single track segment and neither can reverse or move off the track, both remain stuck indefinitely. The same situation may occur in a computer system when processes hold resources and wait for resources held by others.

Necessary Conditions for Deadlock

Deadlock can arise only if the following four conditions hold simultaneously. These are necessary conditions; if any one of them is absent, deadlock cannot occur.

- Mutual exclusion: At least one resource is non-shareable; only one process can use it at a time.

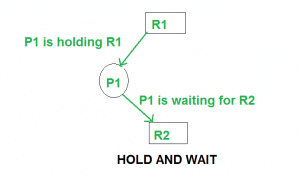

- Hold and wait: A process holding one resource may request additional resources and wait while holding the current ones.

- No preemption: Resources cannot be forcibly taken away from a process; they are released only voluntarily by the process holding them.

- Circular wait: A closed chain of processes exists such that each process holds one or more resources needed by the next process in the chain.

Methods for Handling Deadlock

There are three principal approaches to handling deadlock in operating systems:

- Deadlock prevention or avoidance: Prevent the system from ever entering a deadlock state. Prevention is achieved by ensuring that at least one of the necessary conditions cannot hold; avoidance requires additional information (for example, each process's maximum resource needs) and dynamically ensures the system stays in a safe state.

- Deadlock detection and recovery: Allow deadlocks to occur, detect them when they do, and take actions (preemption, termination, rollback) to recover.

- Ignore the problem: Treat deadlocks as extremely rare and restart or reboot when they occur. This pragmatic approach is used by many general-purpose systems.

Deadlock Prevention and Avoidance

Overview of the Four Conditions

The four conditions that characterise deadlock were listed above. To prevent deadlock one must ensure that the system design or resource-allocation policy negates at least one of these conditions.

Eliminating Mutual Exclusion

In principle, mutual exclusion could be removed by making resources shareable. In practice, many resources (for example, physical devices such as tape drives and printers) are inherently non-shareable. Therefore this condition cannot always be negated.

Eliminating Hold and Wait

Two common policies to eliminate the hold-and-wait condition are:

- A process must request and be allocated all the resources it will need before it begins execution. This prevents holding resources while waiting for others but usually leads to low resource utilisation because resources may be allocated long before they are needed.

- A process must release all currently held resources before requesting additional resources. This avoids hold-and-wait but may lead to starvation or poor performance if processes repeatedly release and reacquire resources.

Eliminating No Preemption

If preemption is allowed, the operating system can forcibly take a resource away from a process and give it to another. Practical difficulties arise: the process may be left in an inconsistent state, and not all resources can be preempted (for example, I/O in progress).

Eliminating Circular Wait

One common scheme to prevent circular wait is to impose a total ordering on all resource types and require that each process requests resources in strictly increasing (or strictly decreasing) order of enumeration. For example, assign numeric IDs to resources. If a process has resource R5, it may request only resources with numbers greater than 5; requests for lower-numbered resources must be denied. This ordering prevents cycles in the resource-wait graph.

Deadlock Avoidance: Banker's Algorithm

Banker's Algorithm is a deadlock-avoidance algorithm, originally proposed by Edsger W. Dijkstra. It assumes that the system knows, in advance, the maximum number of instances of each resource type that each process may request. The algorithm grants a request only if doing so leaves the system in a safe state. A safe state is one in which there exists some sequence of process completions (a safe sequence) such that each process can obtain its maximum required resources and complete.

Inputs to the Banker's Algorithm are:

- Maximum resource requirement for each process (Max).

- Resources currently allocated to each process (Allocation).

- Currently available resources in the system (Available).

Request granting policy - a request from process Pi for some resources is granted only when both of the following hold:

- The request is less than or equal to Pi's remaining need (Request ≤ Need).

- The request is less than or equal to the current Available vector.

If these two checks pass, the system tentatively allocates the resources to Pi and runs the safety algorithm to determine whether the resulting state is safe. If the state is safe, the allocation is made permanent; otherwise, the request is denied and Pi must wait.

Example (illustrative reference)

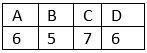

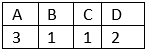

Total resources in the system:

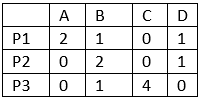

Available system resources are:

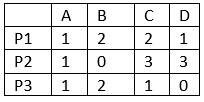

Processes (currently allocated resources):

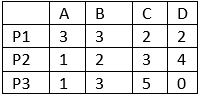

Processes (maximum resources):

Need = Maximum - Allocation. Processes (need resources):

Note: Deadlock prevention is more restrictive than deadlock avoidance. Prevention eliminates the possibility of deadlock by ruling out one of the necessary conditions, whereas avoidance permits more concurrency by requiring additional knowledge and checking for safe states.

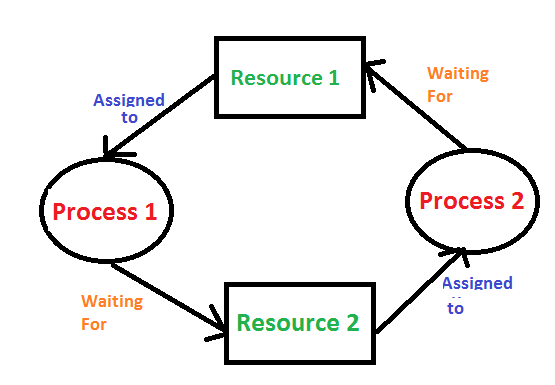

Resource-Allocation Graphs and Wait-For Graphs

A resource-allocation graph (RAG) is a directed graph used to model the allocation of resources to processes. It contains two kinds of nodes: process nodes (P1, P2, ...) and resource nodes (R1, R2, ...). There are two kinds of directed edges:

- An assignment edge from a resource node R to a process node P (R → P) indicates that an instance of R is currently allocated to P.

- A request edge from a process node P to a resource node R (P → R) indicates that P has requested an instance of R and is waiting.

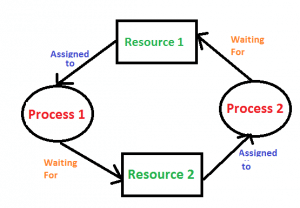

If the RAG contains a cycle and every resource in the cycle has exactly one instance, then a deadlock exists. If a resource type has multiple instances, a cycle in the RAG is a necessary but not sufficient condition for deadlock.

A wait-for graph is a simplified graph where resource nodes are removed and an edge Pi → Pj indicates that process Pi is waiting for a resource currently held by process Pj. The wait-for graph is convenient for deadlock detection in the single-instance case because detecting a cycle in the wait-for graph is equivalent to detecting deadlock.

Deadlock Detection and Recovery

Deadlock Detection

Deadlock detection methods differ depending on whether resource types have single or multiple instances.

- Single-instance resources: Build the resource-allocation graph or wait-for graph. If the graph contains a cycle, deadlock is present. The presence of a cycle is both necessary and sufficient.

- Multiple-instance resources: Cycle detection alone is insufficient; the system may or may not be deadlocked. Detection algorithms for the multiple-instance case use matrices and vectors similar to the Banker's safety algorithm. These algorithms examine the current Allocation, Request (or Need) and Available vectors to determine whether a set of processes are unable to proceed.

In the example above, R1 and R2 have single instances and the graph R1 → P1 → R2 → P2 → R1 (a cycle) confirms a deadlock.

Deadlock Detection Algorithm (multiple instances) - outline

- Let Available be the vector of currently available resources.

- Let Allocation be the matrix of current allocations; Need = Max - Allocation.

- Maintain a Finish array initialised to false for all processes that have non-zero allocation, true for processes with zero allocation.

- Find a process Pi such that Finish[i] is false and Need[i] ≤ Available. If none exists, go to step 6.

- Set Available = Available + Allocation[i]; set Finish[i] = true; repeat step 4.

- If Finish[i] is true for all i, the system is not deadlocked; otherwise, the processes with Finish[i] = false are deadlocked.

Deadlock Recovery

Once a deadlock is detected, the system must recover. Recovery techniques include:

- Process termination: Abort all deadlocked processes, or abort processes one at a time until the deadlock is resolved. After each abort, re-run the detection routine to check whether the deadlock has been eliminated. Choosing which process to abort (the victim selection) may be based on priority, CPU time used, time left to completion, resources held, or a combination of metrics.

- Resource preemption and rollback: Preempt resources from some processes and reassign them so that other processes can complete. Preemption may require rolling back a process to some safe checkpoint. Repeated preemption can lead to starvation of the victim; mechanisms such as priority boosting or increased penalty cost are used to reduce this risk.

Traditional desktop operating systems commonly favour the "ignore" approach or simple restart policies because comprehensive detection and recovery can be expensive in time and complexity. Real-time and high-availability systems, however, often implement detection and recovery strategies.

Related Concepts: Starvation and Livelock

- Starvation (indefinite postponement): A process may be continually denied the resources it needs while other processes proceed, due to scheduling or resource-allocation policies. Starvation is not the same as deadlock; in starvation, some other process is making forward progress.

- Livelock: Processes continuously change their state in response to other processes without making any actual progress (for example, two processes repeatedly changing their resource requests in response to each other). Livelock differs from deadlock because processes are not blocked; they are active but not completing useful work.

Practical Strategies and Trade-offs

When designing or configuring a system, choose a deadlock strategy based on expected workload and system goals:

- Prevention policies often reduce concurrency and resource utilisation but guarantee absence of deadlock.

- Avoidance (for example, Banker's algorithm) permits higher concurrency but requires advance knowledge of maximum demands and incurs runtime checking overhead.

- Detection and recovery allow maximal concurrency but pay the cost of periodic detection and potentially expensive recovery actions.

- Ignoring deadlock is acceptable where deadlocks are extremely rare and the cost of recovery (for example, rebooting) is acceptable compared with continuous overheads of prevention or detection.

Conclusion

Deadlock is an important concept in operating systems that arises from resource contention under certain necessary conditions. Understanding the four necessary conditions, the structure of resource-allocation and wait-for graphs, and the differences between prevention, avoidance and detection/recovery is essential for designing robust systems. Practical systems balance safety, performance and complexity when choosing how to handle deadlocks.

FAQs on Notes: Deadlock

| 1. What is a deadlock in operating systems? |  |

| 2. What are the necessary conditions for a deadlock to occur? |  |

| 3. How can deadlocks be prevented in an operating system? |  |

| 4. What is the difference between deadlock avoidance and deadlock detection? |  |

| 5. How does the Banker's algorithm help in deadlock avoidance? |  |

| Explore Courses for Computer Science Engineering (CSE) exam |  |

| Get EduRev Notes directly in your Google search |  |