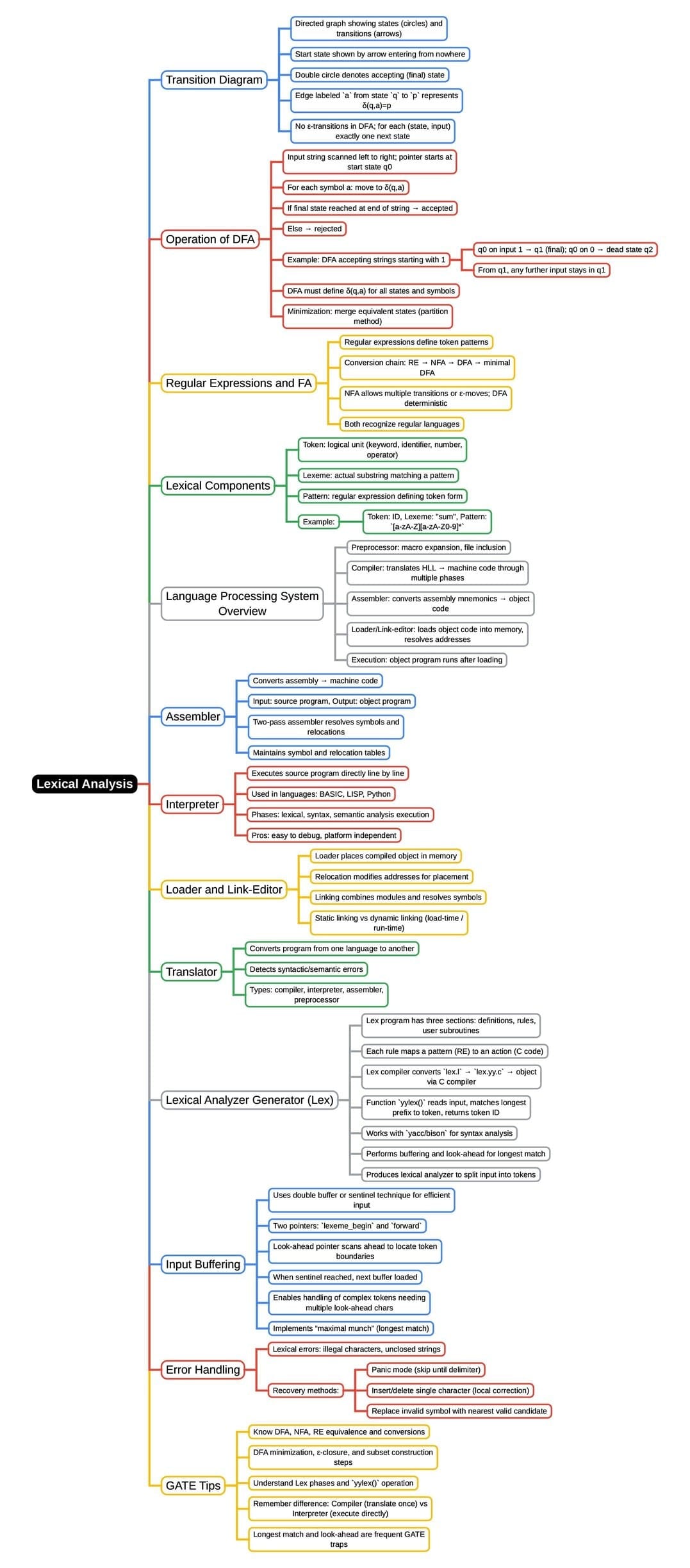

Mind Map: Lexical Analysis

The document Mind Map: Lexical Analysis is a part of the Computer Science Engineering (CSE) Course Compiler Design.

FAQs on Mind Map: Lexical Analysis

1. What is lexical analysis and why is it important in computer science?

Ans. Lexical analysis is the first phase of compilation, in which the lexical analyzer (scanner) reads the source program as a stream of characters and groups them into tokens such as keywords, identifiers, literals, and operators. This phase matters because it gives the parser a simplified, structured stream of tokens to work with instead of raw characters, so the later phases can analyze the syntax of the program effectively.

2. What are the main components of a lexical analyzer?

Ans. A lexical analyzer is typically built around an input buffer and a finite automaton (finite state machine), and it works alongside a symbol table. The input buffer holds the source code being scanned, and the finite automaton recognizes patterns in the input to produce tokens. The symbol table, which is shared with the other compiler phases, stores information about identifiers; the lexical analyzer usually enters the identifier names, while attributes such as type and scope are generally filled in by later phases.
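
As a rough illustration of how these three pieces fit together, the sketch below scans a string (standing in for the input buffer) with a small table-driven finite automaton and records identifiers in a dictionary acting as the symbol table. The state names, token kinds (`ID`, `NUM`), and the `scan` function are hypothetical choices made for this example, not part of any particular compiler.

```python
# Hypothetical mini-scanner: states, token kinds, and names are illustrative.

def char_class(ch):
    """Map a character to the input class used by the automaton."""
    if ch.isalpha() or ch == "_":
        return "letter"
    if ch.isdigit():
        return "digit"
    return "other"

# Transition table of a small DFA: (state, character class) -> next state.
TRANSITIONS = {
    ("start", "letter"): "ident",
    ("ident", "letter"): "ident",
    ("ident", "digit"): "ident",
    ("start", "digit"): "number",
    ("number", "digit"): "number",
}
# Accepting states and the token kind each one produces.
ACCEPTING = {"ident": "ID", "number": "NUM"}

def scan(buffer):
    """Return (tokens, symbol_table) for the characters in buffer."""
    tokens, symbol_table = [], {}
    pos = 0
    while pos < len(buffer):
        if buffer[pos].isspace():          # whitespace separates tokens
            pos += 1
            continue
        state, start = "start", pos
        # Follow transitions as far as possible (longest match).
        while pos < len(buffer):
            nxt = TRANSITIONS.get((state, char_class(buffer[pos])))
            if nxt is None:
                break
            state, pos = nxt, pos + 1
        if state not in ACCEPTING:
            raise ValueError(f"unexpected character {buffer[pos]!r} at {pos}")
        lexeme = buffer[start:pos]
        kind = ACCEPTING[state]
        if kind == "ID":
            # Enter the name on first sight; reuse its index afterwards.
            index = symbol_table.setdefault(lexeme, len(symbol_table))
            tokens.append((kind, index))
        else:
            tokens.append((kind, lexeme))
    return tokens, symbol_table
```

Here an identifier token carries an index into the symbol table rather than the name itself, which is one common arrangement; the table stores only names, since type and scope would be added by later phases.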

3. How does a lexical analyzer differ from a parser?

Ans. A lexical analyzer and a parser perform different jobs in the compilation process. The lexical analyzer breaks the source code into tokens, the basic elements of the language. The parser takes these tokens and, following the grammar of the language, organizes them into a hierarchical structure known as a parse tree or syntax tree. In short, the lexical analyzer deals with the "words" of the programming language, while the parser deals with the "sentences."
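
The difference in output can be made concrete with a small sketch: `tokenize` below returns a flat list of "words", while `parse` arranges that list into a nested tree. The three-rule expression grammar and both function names are assumptions chosen for illustration, and the tree is represented simply as nested tuples.

```python
import re

def tokenize(source):
    """Lexical analysis: split the text into a flat list of tokens.

    Simplification: characters that match no pattern (such as spaces)
    are silently skipped by re.findall.
    """
    return re.findall(r"\d+|[A-Za-z_]\w*|[+*()]", source)

def parse(tokens):
    """Syntax analysis: arrange tokens into a nested tree.

    Assumed grammar (illustrative only):
        expr   -> term ('+' term)*
        term   -> factor ('*' factor)*
        factor -> NUMBER | IDENTIFIER | '(' expr ')'
    """
    pos = 0

    def peek():
        return tokens[pos] if pos < len(tokens) else None

    def advance():
        nonlocal pos
        tok = tokens[pos]
        pos += 1
        return tok

    def factor():
        if peek() is None:
            raise SyntaxError("unexpected end of input")
        tok = advance()
        if tok == "(":
            node = expr()
            if peek() != ")":
                raise SyntaxError("expected ')'")
            advance()
            return node
        if tok in {"+", "*", ")"}:
            raise SyntaxError(f"unexpected token {tok!r}")
        return tok                      # a number or identifier leaf

    def term():
        node = factor()
        while peek() == "*":
            advance()
            node = ("*", node, factor())
        return node

    def expr():
        node = term()
        while peek() == "+":
            advance()
            node = ("+", node, term())
        return node

    tree = expr()
    if peek() is not None:
        raise SyntaxError(f"unexpected token {peek()!r}")
    return tree
```

For the input `a + 2 * b`, the token list is flat, whereas the tree nests the multiplication under the addition, reflecting the precedence encoded in the grammar.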

4. What are regular expressions and how are they used in lexical analysis?

Ans. Regular expressions are a notation for describing sets of strings (patterns) and are widely used for string matching. In lexical analysis, a regular expression specifies the pattern for each token type, such as keywords, operators, and identifiers. The lexical analyzer, often generated from these expressions by a tool such as Lex that converts them into finite automata, matches them against the input to recognize and categorize tokens efficiently.
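
As a minimal sketch of this idea, the patterns below describe four token types for a hypothetical C-like language, and `classify` reports which type a given lexeme belongs to. The pattern set, the names, and the rule that earlier entries win are assumptions made for the example; real languages define their own patterns.

```python
import re

# Illustrative token patterns, listed in priority order so that
# keywords are tried before the more general identifier pattern.
TOKEN_PATTERNS = [
    ("KEYWORD",    r"if|else|while|return"),
    ("IDENTIFIER", r"[A-Za-z_][A-Za-z0-9_]*"),
    ("NUMBER",     r"[0-9]+(\.[0-9]+)?"),
    ("OPERATOR",   r"==|<=|>=|!=|[-+*/=<>]"),
]

def classify(lexeme):
    """Return the first token type whose pattern matches the whole lexeme."""
    for name, pattern in TOKEN_PATTERNS:
        if re.fullmatch(pattern, lexeme):
            return name
    return None  # the lexeme matches no known token pattern
```

With these patterns, `while` is classified as a keyword, whereas `whilst` falls through to the identifier pattern because the keyword pattern must match the entire lexeme.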

5. Can you explain the process of tokenization in lexical analysis?

Ans. Tokenization is the process of converting the input source code into tokens, the smallest meaningful units of a program. The lexical analyzer scans the input stream, identifies valid tokens according to predefined rules (often written as regular expressions), and discards characters that carry no meaning for the parser, such as whitespace and comments. Each token is classified by type and passed on, usually together with its lexeme or another attribute value, for the parser to process.
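
The scanning loop described above might look roughly like the following sketch. It combines several patterns into one regular expression with named groups, walks the input from left to right, and drops whitespace and comments. The token names, the `//` comment syntax, and the small operator set are assumptions for illustration only.

```python
import re

# Illustrative token rules; COMMENT comes before OPERATOR so that "//"
# is read as the start of a comment rather than as two division signs.
TOKEN_SPEC = [
    ("COMMENT",    r"//[^\n]*"),
    ("WHITESPACE", r"\s+"),
    ("NUMBER",     r"\d+"),
    ("IDENTIFIER", r"[A-Za-z_]\w*"),
    ("OPERATOR",   r"[-+*/=;]"),
]
MASTER = re.compile(
    "|".join(f"(?P<{name}>{pattern})" for name, pattern in TOKEN_SPEC)
)
SKIPPED = {"COMMENT", "WHITESPACE"}   # matched, then discarded

def tokenize(source):
    """Return a list of (token type, lexeme) pairs for source."""
    tokens = []
    pos = 0
    while pos < len(source):
        match = MASTER.match(source, pos)
        if match is None:
            raise SyntaxError(
                f"unexpected character {source[pos]!r} at offset {pos}"
            )
        if match.lastgroup not in SKIPPED:
            tokens.append((match.lastgroup, match.group()))
        pos = match.end()             # continue after the matched lexeme
    return tokens
```

Each returned pair holds the token type and its lexeme, which is one simple form of the classified output handed to the parser.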
