Data Transfer Cost Reduction

Data transfer cost reduction is one of the most heavily tested cost-optimization areas in the AWS Solutions Architect Associate exam. This topic focuses on understanding how AWS charges for data movement between services, regions, availability zones, and the internet, and how to architect solutions that minimize these costs without sacrificing performance or availability. Mastering these principles directly impacts your ability to select the most cost-effective architecture in scenario-based questions.

Core Concepts

AWS Data Transfer Pricing Model

AWS charges for data transfer based on direction, location, and service involved. Data transfer IN to AWS from the internet is always free, but data transfer OUT from AWS to the internet incurs charges that vary by region and volume. Understanding this asymmetry is critical for exam scenarios.

Key pricing principles:

- Inbound traffic from internet: Free (0 USD per GB)

- Outbound traffic to internet: Charged per GB, tiered pricing (decreases with volume)

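- Data transfer between AWS services in the same region: Free when using private IP addresses, except traffic that crosses Availability Zones (charged as listed below)

- Data transfer between regions: Always charged, whichever direction the data flows; the per-GB charge is billed as outbound transfer from the source region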

- Data transfer between Availability Zones: Charged at 0.01 USD per GB in each direction (both ingress and egress)

- Data transfer within the same AZ: Free when using private IP addresses
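
The pricing rules above can be sketched as a small lookup table. This is a minimal illustration, not an official rate card: the path names and the `monthly_transfer_cost` helper are hypothetical, the 0.09 USD internet egress rate is an assumed first-tier example, and the cross-AZ entry assumes both ends of the flow are in your account, so each GB is metered once leaving one AZ and once entering the other.

```python
# Illustrative per-GB rates in USD; real rates vary by region and volume tier.
RATES_USD_PER_GB = {
    "internet_in": 0.00,         # inbound from the internet is free
    "internet_out": 0.09,        # assumption: example first-tier egress rate
    "same_az_private_ip": 0.00,  # same AZ over private IPs is free
    "cross_az": 0.02,            # 0.01 out of one AZ + 0.01 into the other
}


def monthly_transfer_cost(flows_gb: dict) -> float:
    """Sum the charge for each flow, given {path_name: GB moved that month}."""
    total = 0.0
    for path, gb in flows_gb.items():
        if path not in RATES_USD_PER_GB:
            raise ValueError(f"unknown transfer path: {path}")
        total += RATES_USD_PER_GB[path] * gb
    return round(total, 2)


example = {"internet_in": 500, "internet_out": 1000, "cross_az": 200}
print(monthly_transfer_cost(example))
```

In this example only the outbound and cross-AZ flows contribute to the bill; the 500 GB of inbound traffic costs nothing.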

When to Use This

- Exam questions asking which architecture minimizes cost will test your understanding of free vs. paid transfer scenarios

- When comparing multi-region vs. single-region architectures, data transfer costs between regions become the deciding factor

- Scenarios involving CloudFront, S3, or EC2 will test whether you know that same-region transfers via private IPs are free

- Questions about hybrid architectures require knowing that data leaving AWS to on-premises locations over the internet or a VPN is charged as internet egress, while Direct Connect has its own lower egress rate

CloudFront for Data Transfer Cost Reduction

CloudFront is AWS's content delivery network (CDN) that caches content at edge locations worldwide. Using CloudFront reduces data transfer costs because CloudFront-to-internet transfer rates are lower than EC2/S3-to-internet rates, and data transfer from AWS origins such as S3 or EC2 to CloudFront edge locations is free.

Cost benefits:

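- S3 to CloudFront: Free data transfer (no data transfer charge for origin fetches; S3 request charges still apply)

- CloudFront to internet: Lower per-GB rates than direct S3 or EC2 egress; the gap is small at the first tier (e.g., 0.085 vs. 0.09 USD per GB in the US), grows at higher volume tiers, and varies by region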

- Regional edge caches: Reduce repeated origin fetches, lowering both transfer and request costs

- Origin Shield: Additional caching layer that consolidates origin requests, further reducing data transfer from origin

When to Use This

- When the exam presents a scenario with global users accessing static content from S3 or EC2, CloudFront is almost always the correct cost-reduction answer

- If a question mentions "high egress costs" or "large data transfer bills," CloudFront should be your first consideration

- Scenarios involving video streaming, software distribution, or website acceleration directly test CloudFront's cost benefits

- When comparing Direct Connect vs. CloudFront for content delivery, CloudFront wins for internet-facing content distribution

VPC Endpoints for AWS Service Access

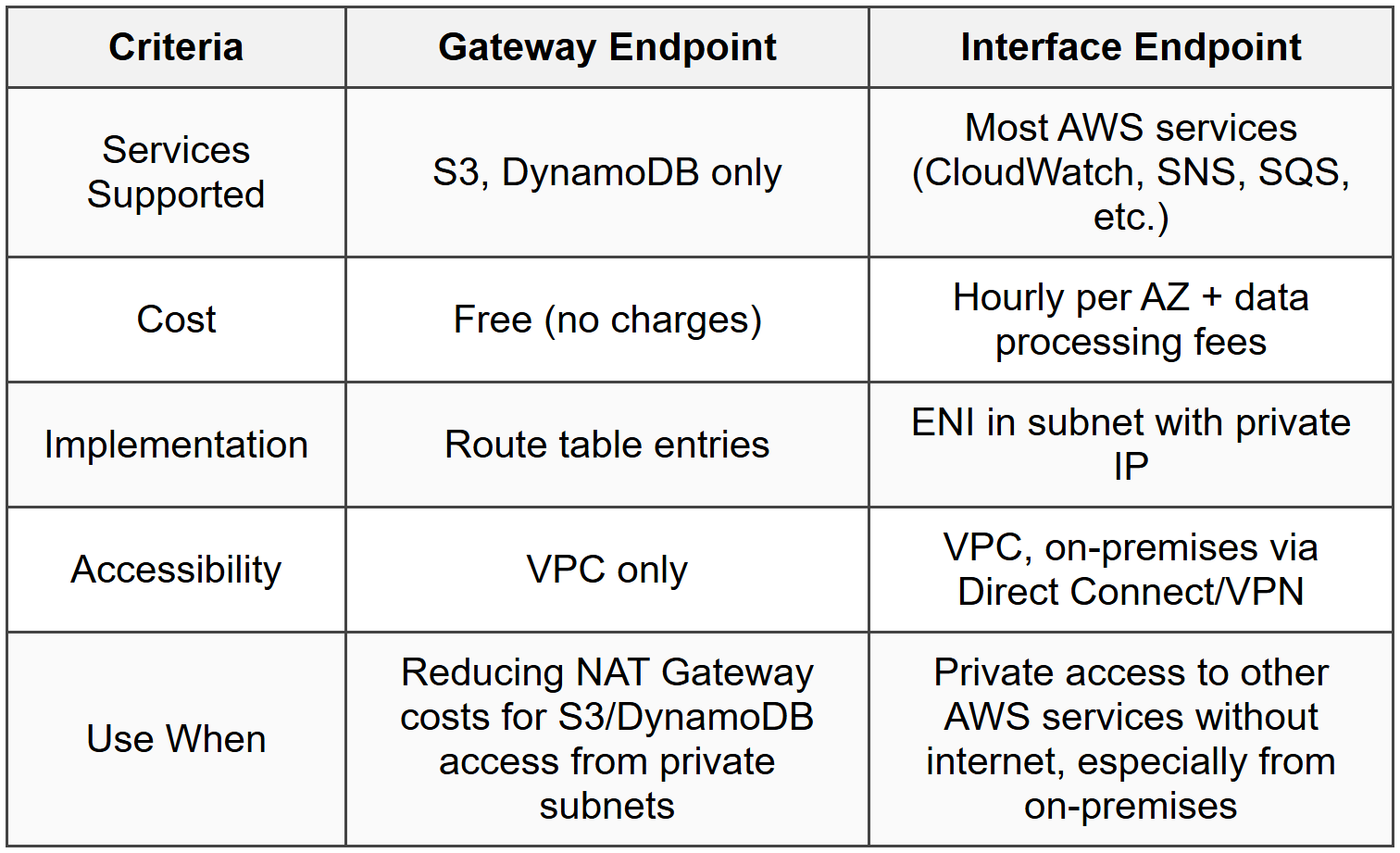

VPC Endpoints allow private connectivity between your VPC and supported AWS services without traversing the internet or requiring a NAT Gateway. There are two types: Gateway Endpoints (for S3 and DynamoDB) and Interface Endpoints (for other AWS services via AWS PrivateLink).

Cost impact:

- Gateway Endpoints (S3, DynamoDB): Completely free, no hourly charges, no data processing charges

- Interface Endpoints: Hourly charge per endpoint (approximately 0.01 USD per hour per AZ) plus data processing charges (approximately 0.01 USD per GB)

- NAT Gateway alternative: Eliminates NAT Gateway charges (0.045 USD per hour + 0.045 USD per GB processed) for S3/DynamoDB access

- Internet Gateway alternative: Avoids public IP addresses and associated data transfer charges when accessing AWS services
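
As a rough check on when an Interface Endpoint pays for itself, assume a 30-day month (720 hours), \(n\) AZs, and \(V\) GB per month sent to the service, and assume the NAT Gateway's hourly charge is paid either way because it is still needed for internet traffic. The symbols \(n\) and \(V\) are introduced here for illustration; the rates are the approximate ones listed above.

\[
0.01 \times 720 \times n + 0.01\,V < 0.045\,V
\quad\Longrightarrow\quad
V > \frac{7.2\,n}{0.035} \approx 205.7\,n \ \text{GB per month}
\]

Below that volume, leaving the traffic on the existing NAT Gateway is cheaper on cost alone.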

When to Use This

- Scenarios describing private subnet instances accessing S3 or DynamoDB should trigger Gateway Endpoint as the answer for zero-cost solution

- When the exam asks how to reduce NAT Gateway costs for AWS service access, Gateway Endpoints are the correct answer

- Questions about securing data in transit without internet exposure while reducing costs point to VPC Endpoints

- Interface Endpoints appear in scenarios requiring private access to services like CloudWatch, SNS, or SQS from on-premises via Direct Connect

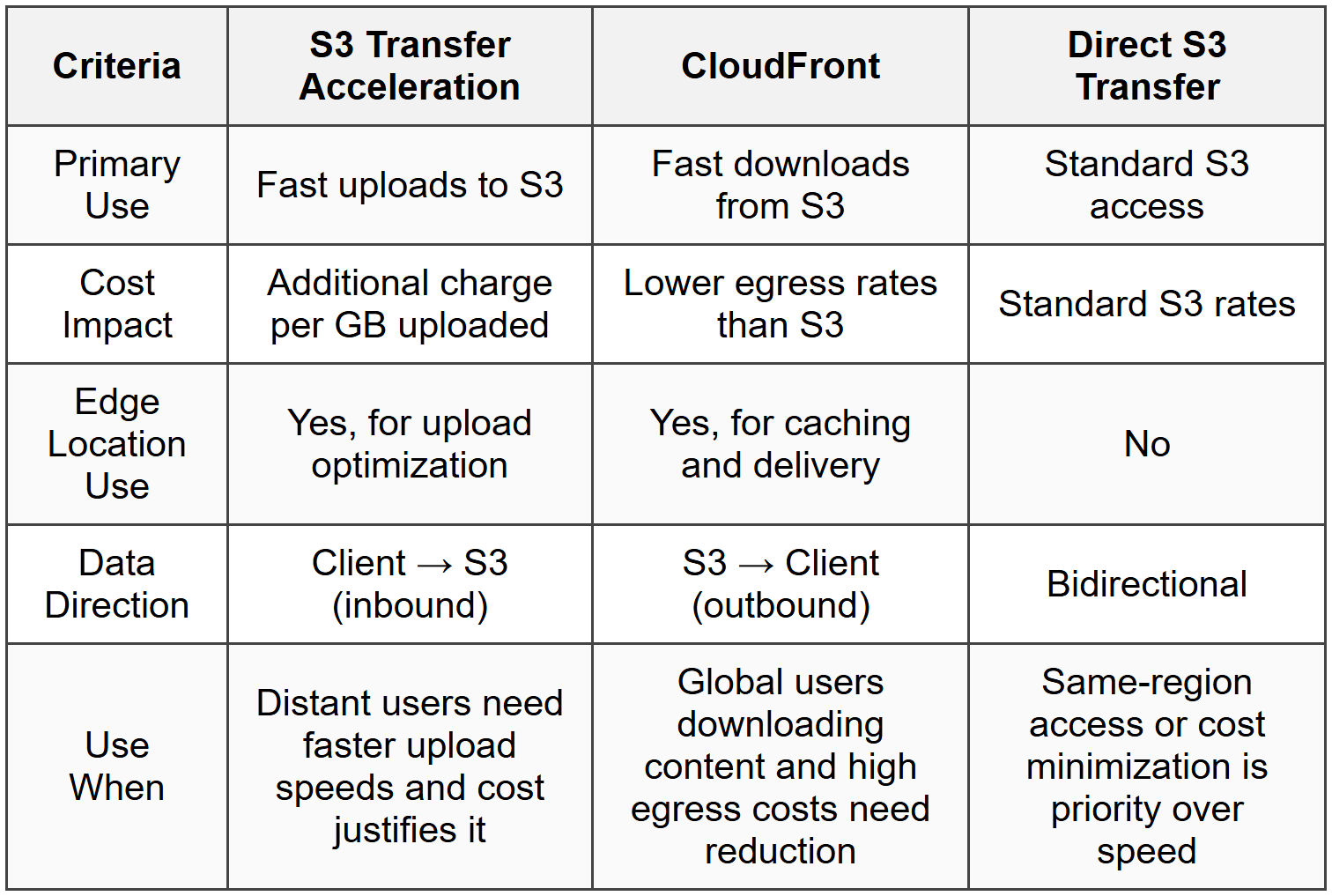

S3 Transfer Acceleration vs. CloudFront vs. Direct Transfer

S3 Transfer Acceleration uses CloudFront edge locations to accelerate uploads to S3 by routing data through optimized network paths. This is distinct from using CloudFront for content delivery (downloads). Understanding when each option is cost-effective is exam-critical.

Cost and use considerations:

- S3 Transfer Acceleration: Additional per-GB charge (varies by edge location, 0.04-0.08 USD per GB) on top of standard S3 costs; used primarily to speed up long-distance uploads

- CloudFront with S3 origin: Lower egress costs for downloads; free S3-to-CloudFront transfer

- Direct S3 access: Standard S3 data transfer rates apply; cheapest for same-region access

- Performance requirement: Transfer Acceleration only makes sense when upload speed justifies the additional cost (typically long-distance uploads)
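
To make the surcharge concrete, the sketch below turns the 0.04-0.08 USD per GB range into a monthly figure. The function name is hypothetical, and which end of the range applies depends on the edge location used, so it returns both bounds.

```python
def acceleration_surcharge_range(gb_uploaded: float,
                                 low_rate: float = 0.04,
                                 high_rate: float = 0.08) -> tuple:
    """Return (min, max) monthly Transfer Acceleration surcharge in USD."""
    return (round(gb_uploaded * low_rate, 2), round(gb_uploaded * high_rate, 2))


# 10 TB per month, counted here as 10,000 GB for round numbers.
print(acceleration_surcharge_range(10_000))
```

Because this charge comes on top of standard S3 costs, it only makes sense when the speed gain is worth that extra monthly amount.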

When to Use This

- Exam scenarios describing slow uploads from distant locations to S3 test whether you know Transfer Acceleration is the solution

- Questions asking to minimize download costs for global users point to CloudFront, not Transfer Acceleration

- When the scenario mentions both uploads and downloads, you may need both Transfer Acceleration (upload) and CloudFront (download)

- Cost-optimization questions comparing these three options require knowing Transfer Acceleration adds cost, so it's only justified by performance needs

Regional Data Transfer Optimization

Data transfer between AWS regions is charged per GB whichever direction the data flows. Minimizing cross-region data transfer is a key cost-reduction strategy tested extensively in architecture design questions.

Key strategies:

- Consolidate resources in single region: Keeps data transfer within region (free when using private IPs)

- Use S3 Cross-Region Replication selectively: Only replicate necessary data; consider lifecycle policies to delete unnecessary replicas

- Leverage CloudFront: Single global distribution point reduces need for multi-region deployments

- VPC Peering pricing: Inter-region peering traffic is billed at the same rates as inter-region data transfer; not a cost-saving measure but enables private connectivity

- Data compression: Compress data before cross-region transfer to reduce GB transferred

When to Use This

- Scenarios describing multi-region architectures with high costs test whether you know cross-region transfer is the likely culprit

- When comparing disaster recovery options, cheaper alternatives like S3 Cross-Region Replication with lifecycle policies beat continuous multi-region writes

- Questions about global applications may test whether CloudFront with a single-region origin is more cost-effective than multi-region deployments

- Exam questions about VPC Peering cost benefits require knowing it doesn't reduce transfer costs, only provides private connectivity

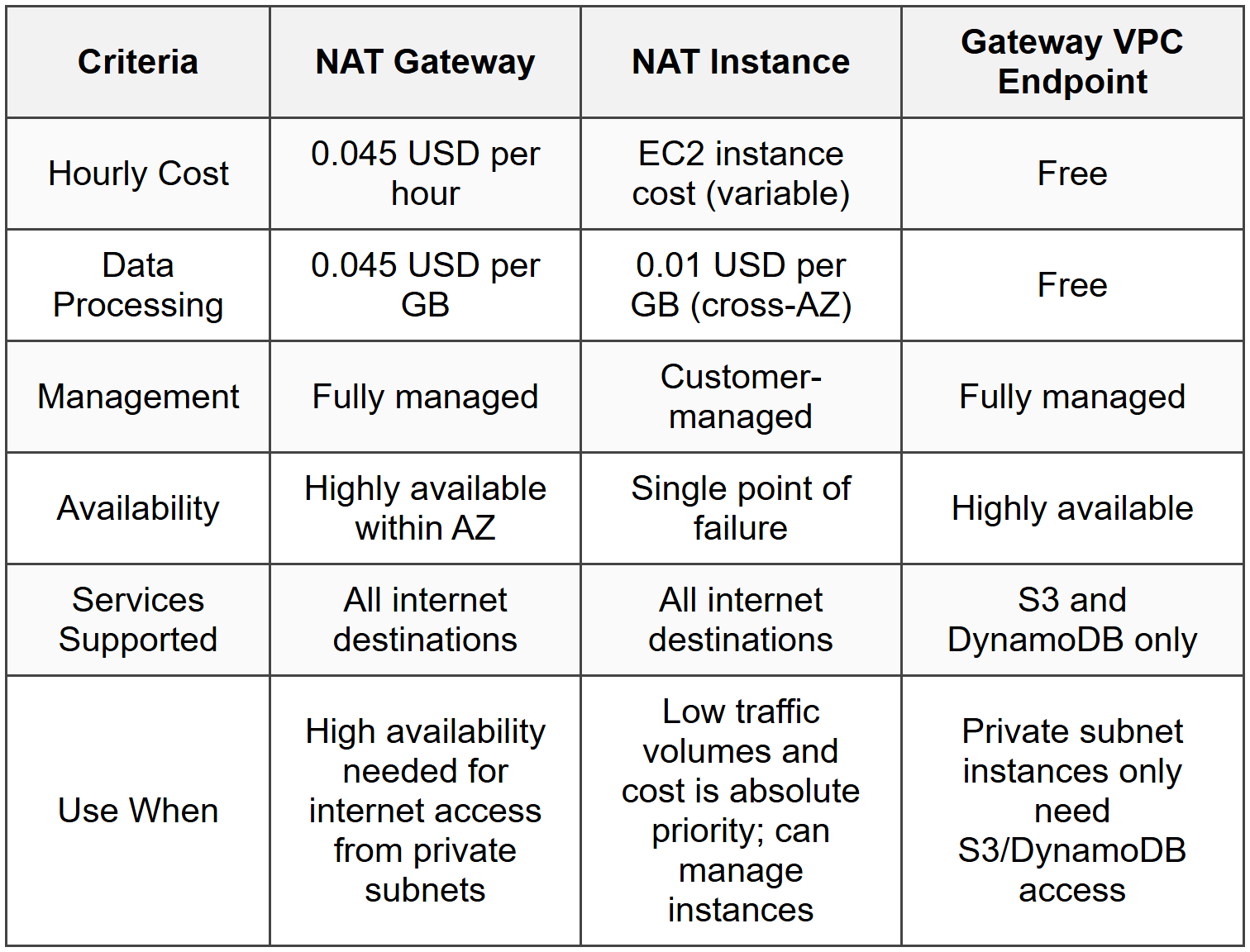

NAT Gateway vs. NAT Instance vs. VPC Endpoints

For private subnet instances needing internet or AWS service access, the choice between NAT Gateway, NAT Instance, and VPC Endpoints significantly impacts data transfer costs.

Cost comparison:

- NAT Gateway: 0.045 USD per hour per AZ + 0.045 USD per GB processed; managed service, highly available within AZ

- NAT Instance: EC2 instance cost (varies by type) + 0.01 USD per GB cross-AZ if sourcing from different AZ; requires management and patching

- Gateway VPC Endpoint: Free for S3 and DynamoDB access; eliminates NAT Gateway/Instance need for these services

- Interface VPC Endpoint: 0.01 USD per hour per AZ + 0.01 USD per GB; cheaper than NAT Gateway for specific AWS service access
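
A minimal sketch of this comparison for traffic bound for an AWS service follows. It assumes a 30-day month and assumes the NAT Gateway already exists for internet access, so only its per-GB processing charge counts against it. NAT Instances are left out because their cost depends on the instance type. The function names are hypothetical.

```python
HOURS_PER_MONTH = 24 * 30  # assumption: 30-day month


def nat_gateway_marginal_cost(gb: float) -> float:
    """Per-GB processing only; the hourly charge is assumed already paid."""
    return 0.045 * gb


def interface_endpoint_cost(gb: float, az_count: int) -> float:
    """Hourly charge per AZ plus per-GB data processing."""
    return 0.01 * HOURS_PER_MONTH * az_count + 0.01 * gb


def cheapest_path(service: str, gb: float, az_count: int = 1) -> str:
    """Name the cheapest private path to an AWS service for a monthly volume."""
    options = {
        "nat_gateway": nat_gateway_marginal_cost(gb),
        "interface_endpoint": interface_endpoint_cost(gb, az_count),
    }
    if service in ("s3", "dynamodb"):
        options["gateway_endpoint"] = 0.0  # Gateway Endpoints are free
    return min(options, key=options.get)


print(cheapest_path("s3", 500))
print(cheapest_path("sqs", 100))
print(cheapest_path("sqs", 500))
```

For S3 and DynamoDB the Gateway Endpoint wins at any non-zero volume. For other services the answer flips from NAT Gateway to Interface Endpoint once the volume is large enough to cover the endpoint's hourly charge.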

When to Use This

- Questions about reducing costs for private instances accessing S3 or DynamoDB should point to Gateway Endpoints as the zero-cost solution

- When the scenario describes high NAT Gateway bills for general internet access, consider whether a NAT Instance with specific instance sizing might be cheaper (though less common in real practice)

- Exam scenarios mentioning both internet access and AWS service access test whether you know to use VPC Endpoints for AWS services and NAT Gateway only for internet

- High-availability requirements favor NAT Gateway despite higher cost; cost-only optimization might favor NAT Instance or VPC Endpoints depending on use case

Cross-AZ Data Transfer

Data transfer between Availability Zones within the same region incurs charges of 0.01 USD per GB in each direction, so a GB that crosses an AZ boundary costs 0.02 USD in total when the sender and receiver are in the same account (0.01 USD out of one AZ plus 0.01 USD into the other). While relatively small, this adds up in high-throughput architectures and is tested in cost-optimization scenarios.

Optimization strategies:

- Single-AZ deployment: Eliminates cross-AZ transfer but sacrifices high availability; only viable for non-critical workloads

- Cache locally: Reduce cross-AZ calls by caching data within the same AZ (e.g., ElastiCache in each AZ)

- Read replicas in same AZ: Direct read traffic to same-AZ RDS read replicas to avoid cross-AZ charges

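- Load balancer consideration: Network Load Balancer cross-zone traffic is billed as cross-AZ transfer (cross-zone load balancing is off by default, so enable it only when needed); traffic between an Application Load Balancer and its targets in the same region is not charged for crossing AZs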

- EFS vs. EBS: EFS incurs cross-AZ charges when accessed from different AZs; EBS is AZ-locked (no cross-AZ charges but also no cross-AZ access)
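
The effect of these strategies can be estimated with a short calculation. This is a sketch under stated assumptions: both ends of each flow are in the same account, so every GB crossing an AZ boundary is metered at 0.01 USD on the way out and 0.01 USD on the way in, and `same_az_fraction` is a hypothetical parameter for the share of traffic that caching or same-AZ replicas keep local.

```python
CROSS_AZ_RATE_PER_SIDE = 0.01  # USD per GB, charged in each direction


def cross_az_monthly_cost(gb_crossing: float) -> float:
    """Cost of GB that cross an AZ boundary, metered on both sides."""
    return round(gb_crossing * CROSS_AZ_RATE_PER_SIDE * 2, 2)


def cost_after_same_az_routing(gb_total: float, same_az_fraction: float) -> float:
    """Cost when a fraction of traffic (0.0-1.0) stays inside its own AZ."""
    if not 0.0 <= same_az_fraction <= 1.0:
        raise ValueError("same_az_fraction must be between 0 and 1")
    return cross_az_monthly_cost(gb_total * (1.0 - same_az_fraction))


print(cross_az_monthly_cost(10_000))
print(cost_after_same_az_routing(10_000, 0.9))
```

Keeping 90% of a 10,000 GB monthly flow inside its own AZ cuts the cross-AZ charge by the same proportion.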

When to Use This

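- Exam scenarios describing Multi-AZ RDS with high data transfer costs test whether you understand that application-to-database traffic crossing AZs is charged, whereas replication between the Multi-AZ primary and standby is not billed

- Questions about load balancer costs may involve cross-zone load balancing settings; the cross-AZ data transfer charge applies to Network Load Balancers, not to Application Load Balancers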

- When comparing EFS vs. EBS, cross-AZ access costs for EFS are a key differentiator

- Scenarios asking to optimize costs for read-heavy workloads may test whether you know to use same-AZ read replicas

Direct Connect for Hybrid Architectures

AWS Direct Connect provides dedicated network connections from on-premises to AWS, reducing data transfer costs compared to internet-based transfers for high-volume scenarios. Understanding when Direct Connect is cost-justified versus VPN or internet transfer is exam-essential.

Cost considerations:

- Port hours: Fixed hourly charges based on connection speed (1 Gbps, 10 Gbps, 100 Gbps)

- Data transfer OUT: Lower than standard internet egress (typically 0.02 USD per GB vs. 0.09 USD per GB for internet)

- Data transfer IN: Free (same as internet inbound)

- Break-even point: Generally cost-effective when transferring several TB per month or more

- VPN comparison: VPN has no dedicated port costs but uses standard internet transfer rates; cheaper for low volumes

When to Use This

- Scenarios describing large-scale data migration or continuous high-volume data transfer between on-premises and AWS test Direct Connect knowledge

- When the exam asks about reducing hybrid cloud costs with multi-TB monthly transfers, Direct Connect is the answer

- Questions comparing VPN to Direct Connect for cost require knowing VPN is cheaper for low volumes despite lower reliability

- Exam scenarios involving consistent network performance along with cost reduction point to Direct Connect

S3 Storage Class Selection for Transfer Cost Reduction

While storage classes primarily affect storage costs, they also impact data transfer and retrieval costs. Choosing the right class reduces overall costs when access patterns are considered.

Transfer-related considerations:

- S3 Standard: No retrieval fees; best for frequently accessed data with unpredictable access patterns

- S3 Intelligent-Tiering: Small monthly monitoring fee per object, but no retrieval fees; moves data automatically between tiers

- S3 Standard-IA: Retrieval fee of 0.01 USD per GB; minimum storage duration of 30 days

- S3 One Zone-IA: Same retrieval fee as Standard-IA but data in single AZ only

- S3 Glacier: Higher retrieval costs (varies by retrieval speed: 0.01-0.03 USD per GB); for archival data

- CloudFront with any S3 class: S3-to-CloudFront transfer is free regardless of storage class; retrieval fees still apply for IA/Glacier classes

When to Use This

- Exam scenarios asking about cost optimization for infrequently accessed data delivered via CloudFront test whether you know retrieval fees apply even with CloudFront

- Questions about archival data with occasional access patterns may test Glacier retrieval cost awareness

- When comparing storage classes for cost-sensitive applications with unpredictable access, Intelligent-Tiering avoids retrieval fee surprises

- Scenarios describing frequent access patterns with high CloudFront usage should point to S3 Standard to avoid retrieval fees

Commonly Tested Scenarios / Pitfalls

1. Scenario: A company has a web application serving global users from an S3 bucket in us-east-1. The question states that data transfer costs are extremely high and asks for the most cost-effective solution to reduce egress charges.

Correct Approach: Deploy Amazon CloudFront distribution with the S3 bucket as the origin. CloudFront reduces egress costs because S3-to-CloudFront transfer is free, and CloudFront-to-internet rates are lower than direct S3 egress.

Check first: Confirm the scenario involves outbound data transfer (downloads by users) rather than inbound uploads. CloudFront optimizes downloads, not uploads.

Do NOT do first: Do not immediately suggest S3 Transfer Acceleration, which is for upload optimization and would actually increase costs since it adds per-GB charges on top of standard S3 costs.

Why other options are wrong: S3 Cross-Region Replication adds cross-region transfer charges and is meant for disaster recovery or latency reduction, not cost reduction; moving to S3 Glacier introduces retrieval delays and fees without addressing the core egress cost issue for active content.

2. Scenario: An application in private subnets needs to access DynamoDB and download patches from the internet. The question asks how to minimize data transfer costs while maintaining functionality.

Correct Approach: Implement a Gateway VPC Endpoint for DynamoDB (free) and keep the NAT Gateway for internet access. This hybrid approach minimizes costs by using the free endpoint for AWS service access.

Check first: Identify which traffic goes to AWS services versus the internet. Gateway Endpoints only work for S3 and DynamoDB; internet access still requires NAT Gateway or NAT Instance.

Do NOT do first: Do not replace the NAT Gateway entirely without considering that Gateway Endpoints do not provide internet access. The application still needs NAT for patch downloads.

Why other options are wrong: Using only NAT Gateway for both DynamoDB and internet access works but incurs unnecessary charges for DynamoDB (0.045 USD per GB vs. free); using only Gateway Endpoints fails because they cannot provide internet access; using an Internet Gateway in a private subnet violates the private subnet definition and security requirements.

3. Scenario: A multi-AZ RDS database deployment shows high data transfer costs in the billing details. The application is read-heavy with writes occurring only during batch jobs at night. The question asks how to reduce costs.

Correct Approach: Create RDS read replicas in each Availability Zone and configure the application to read from the same-AZ replica. This eliminates cross-AZ data transfer charges for read traffic while maintaining high availability.

Check first: Determine whether cross-AZ charges are coming from database replication, read traffic, or both. The exam will indicate "read-heavy" as a clue that read traffic is the primary cost driver.

Do NOT do first: Do not immediately suggest moving to single-AZ deployment to eliminate cross-AZ charges. While this reduces costs, it sacrifices the high availability that multi-AZ provides, which is typically a non-negotiable requirement in exam scenarios.

Why other options are wrong: Switching to Aurora Global Database increases costs with cross-region replication; implementing caching (ElastiCache) helps performance but does not address the specific cross-AZ database read charges unless the cache is in the same AZ and consistently hit; reducing the number of AZs to just two still incurs cross-AZ charges and reduces availability.

4. Scenario: A company transfers 10 TB of data monthly from on-premises data centers to S3 for backup. Current internet transfer is slow and sometimes unreliable. The question asks for the most cost-effective solution that improves reliability and speed.

Correct Approach: Implement AWS Direct Connect. With 10 TB monthly (approximately 333 GB daily), the combined savings from lower data transfer OUT rates (when accessing data) and improved reliability justify the port hour costs, even though inbound transfer to AWS is free via both internet and Direct Connect.

Check first: Calculate total data volume and consider bi-directional traffic. If the question mentions only uploads (one-way to AWS), remember inbound is always free, so Direct Connect's main benefit is reliability and performance, not cost reduction for inbound-only scenarios. However, backup scenarios often imply occasional restores (outbound), making Direct Connect cost-effective.

Do NOT do first: Do not recommend S3 Transfer Acceleration first. While it can improve upload speed, it adds per-GB charges (0.04-0.08 USD per GB), which for 10 TB monthly adds 400-800 USD monthly on top of other costs without the reliability benefits of a dedicated connection.

Why other options are wrong: AWS Snowball is for one-time or periodic bulk transfers, not continuous monthly transfers; VPN over internet improves security but does not reduce costs or significantly improve speed compared to regular internet; Storage Gateway cached mode reduces the amount of data transferred but does not address the speed and reliability requirements stated in the scenario.

5. Scenario: An architecture uses a Network Load Balancer (NLB) with cross-zone load balancing enabled, distributing traffic across three Availability Zones. The question indicates unexpectedly high data transfer charges and asks what configuration change would reduce costs.

Correct Approach: Disable cross-zone load balancing on the NLB if traffic patterns allow. When cross-zone load balancing is enabled, each load balancer node distributes traffic across the registered targets in all AZs, and the portion that crosses AZ boundaries is billed as cross-AZ data transfer (0.01 USD per GB per direction). Disabling it keeps traffic within the AZ where it arrived but may cause uneven load distribution.

Check first: Verify the load balancer type and whether cross-zone load balancing is enabled. The cross-AZ charge applies to an NLB with cross-zone enabled (it is off by default); an Application Load Balancer always has cross-zone enabled at the load balancer level, and traffic between an ALB and its targets in the same region is not billed as data transfer. The exam will typically hint that "traffic is evenly distributed" or "instances in all AZs are utilized equally" as a clue that cross-zone load balancing is active.

Do NOT do first: Do not immediately reduce the number of AZs the load balancer uses to minimize cross-AZ traffic. This sacrifices high availability, which is rarely the correct trade-off in AWS exam scenarios unless explicitly stated as acceptable.

Why other options are wrong: Moving all instances to a single AZ eliminates cross-AZ costs but destroys fault tolerance; implementing CloudFront in front of the load balancer is for caching static content and does not address internal load-balancer-to-instance traffic charges; using private IPs instead of public IPs is already best practice and does not affect cross-AZ charges within the VPC.

Step-by-Step Procedures or Methods

Task: Calculating cost savings from implementing CloudFront for S3 content delivery

- Determine current monthly data transfer OUT from S3 to internet users (e.g., 5 TB = 5,120 GB)

- Find the S3 data transfer OUT rate for your region (e.g., 0.09 USD per GB for the first 10 TB in us-east-1)

- Calculate current S3 egress cost: \( 5120 \times 0.09 = 460.80 \) USD

- Determine CloudFront data transfer OUT rate for your traffic distribution (e.g., 0.085 USD per GB for the first 10 TB in the US)

- Calculate CloudFront egress cost: \( 5120 \times 0.085 = 435.20 \) USD

- Note that S3-to-CloudFront transfer is free (0 USD)

- Calculate monthly savings: \( 460.80 - 435.20 = 25.60 \) USD

- Subtract CloudFront request charges if given (e.g., 0.0075 USD per 10,000 HTTP requests); for low request volumes, this is negligible

- Final monthly savings: approximately 25.60 USD (about 5.6% of the original 460.80 USD; the percentage varies by region and traffic patterns)

- Note that actual savings increase significantly with higher traffic volumes due to tiered pricing (rates decrease at 10 TB, 50 TB, 150 TB, and 500 TB thresholds)
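
The steps above can be sketched as a short function. The default rates are the example figures from this procedure, not authoritative prices, and the function name and parameters are hypothetical. Only the first pricing tier is modeled.

```python
def cloudfront_monthly_savings(gb_out: float,
                               s3_rate: float = 0.09,
                               cf_rate: float = 0.085,
                               requests: int = 0,
                               request_rate_per_10k: float = 0.0075) -> float:
    """Savings in USD from serving gb_out through CloudFront instead of S3.

    S3-to-CloudFront origin transfer is free, so only the two egress rates
    and the CloudFront request charge enter the calculation.
    """
    s3_cost = gb_out * s3_rate
    cf_cost = gb_out * cf_rate + (requests / 10_000) * request_rate_per_10k
    return round(s3_cost - cf_cost, 2)


gb = 5 * 1024  # 5 TB expressed in GB, as in the steps above
print(cloudfront_monthly_savings(gb))
print(cloudfront_monthly_savings(gb, requests=1_000_000))
```

Passing a request count shows how quickly request charges eat into a small first-tier saving.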

Task: Determining whether Direct Connect is cost-effective versus internet transfer for hybrid architecture

- Calculate monthly data transfer OUT from AWS to on-premises (data transfer IN to AWS is free regardless of method)

- Determine internet egress cost: monthly GB × regional internet egress rate (e.g., 1000 GB × 0.09 USD = 90 USD)

- Identify Direct Connect port speed needed (typically 1 Gbps for most scenarios) and find hourly port cost (e.g., 0.30 USD per hour for 1 Gbps in us-east-1)

- Calculate monthly Direct Connect port cost: \( 0.30 \times 24 \times 30 = 216 \) USD

- Determine Direct Connect data transfer OUT rate (e.g., 0.02 USD per GB for the first 10 TB)

- Calculate Direct Connect transfer cost: monthly GB × Direct Connect rate (e.g., 1000 GB × 0.02 USD = 20 USD)

- Calculate total Direct Connect cost: port cost + transfer cost = 216 + 20 = 236 USD

- Compare to internet cost: 236 USD (Direct Connect) vs. 90 USD (internet)

- Calculate break-even point: \( \frac{216}{(0.09 - 0.02)} = \frac{216}{0.07} \approx 3086 \) GB monthly (approximately 3 TB)

- Conclusion: Direct Connect becomes cost-effective when monthly data transfer OUT exceeds approximately 3 TB for this example; adjust based on specific regional rates and port costs provided in the exam scenario
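
The comparison and break-even calculation above can be expressed as follows. The rates are the example values from this procedure, a 30-day month is assumed, and the function names are hypothetical.

```python
HOURS_PER_MONTH = 24 * 30  # assumption: 30-day month


def internet_cost(gb_out: float, rate: float = 0.09) -> float:
    """Monthly egress cost over the internet; inbound transfer is free."""
    return gb_out * rate


def direct_connect_cost(gb_out: float,
                        port_per_hour: float = 0.30,
                        rate: float = 0.02) -> float:
    """Monthly Direct Connect cost: fixed port hours plus egress."""
    return port_per_hour * HOURS_PER_MONTH + gb_out * rate


def break_even_gb(port_per_hour: float = 0.30,
                  internet_rate: float = 0.09,
                  dx_rate: float = 0.02) -> float:
    """Monthly outbound GB at which both options cost the same."""
    if internet_rate <= dx_rate:
        raise ValueError("Direct Connect never breaks even at these rates")
    return port_per_hour * HOURS_PER_MONTH / (internet_rate - dx_rate)


print(round(internet_cost(1000), 2), round(direct_connect_cost(1000), 2))
print(round(break_even_gb()))
```

Swapping in the rates an exam scenario provides shows on which side of the break-even point the workload falls.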

Task: Optimizing NAT Gateway costs by implementing VPC Endpoints

- Identify all AWS services accessed from private subnets through the NAT Gateway (examine VPC Flow Logs or billing data)

- Separate services into three groups: S3/DynamoDB (Gateway Endpoint eligible), other AWS services (Interface Endpoint eligible), and internet destinations (NAT required)

- Calculate current NAT Gateway costs for S3/DynamoDB traffic: data volume × 0.045 USD per GB (processing charge only; hourly charge remains for NAT Gateway availability)

- Create Gateway VPC Endpoints for S3 and DynamoDB (no cost): configure route tables to direct traffic to endpoints instead of NAT Gateway

- Calculate savings: the full NAT processing cost for S3/DynamoDB traffic is eliminated, because Gateway Endpoints carry no hourly or per-GB charge

- For other AWS services (e.g., CloudWatch, SNS), calculate current NAT processing cost and compare to Interface Endpoint cost (0.01 USD per hour per AZ + 0.01 USD per GB)

- Determine if Interface Endpoint is cheaper: each GB moved off the NAT Gateway saves 0.045 - 0.01 = 0.035 USD, so implement Interface Endpoints when that per-GB saving times the monthly volume exceeds the endpoint's hourly cost

- Calculate Interface Endpoint cost: \( (0.01 \times 24 \times 30 \times \text{number of AZs}) + (\text{data volume in GB} \times 0.01) \)

- For internet-bound traffic, retain NAT Gateway (no VPC Endpoint alternative)

- Final savings: sum of eliminated NAT processing charges for S3/DynamoDB + any savings from Interface Endpoints for other services
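
A minimal sketch of this procedure, assuming a 30-day month and the approximate rates quoted above. The traffic breakdown is made-up illustration data, and `endpoint_savings` is a hypothetical helper, not an AWS API.

```python
HOURS_PER_MONTH = 24 * 30          # assumption: 30-day month
NAT_PER_GB = 0.045                 # NAT Gateway processing charge
IFACE_PER_HOUR_PER_AZ = 0.01       # Interface Endpoint hourly charge
IFACE_PER_GB = 0.01                # Interface Endpoint processing charge
GATEWAY_SERVICES = {"s3", "dynamodb"}


def endpoint_savings(traffic_gb: dict, az_count: int) -> dict:
    """Map each destination to its monthly saving from moving off the NAT.

    traffic_gb maps a destination name to monthly GB currently sent through
    the NAT Gateway. Destinations with no saving are left out of the result.
    """
    savings = {}
    for dest, gb in traffic_gb.items():
        if dest == "internet":
            continue  # no VPC Endpoint alternative; NAT stays
        nat_cost = gb * NAT_PER_GB
        if dest in GATEWAY_SERVICES:
            savings[dest] = round(nat_cost, 2)  # Gateway Endpoint is free
            continue
        iface_cost = (IFACE_PER_HOUR_PER_AZ * HOURS_PER_MONTH * az_count
                      + gb * IFACE_PER_GB)
        if nat_cost > iface_cost:
            savings[dest] = round(nat_cost - iface_cost, 2)
    return savings


# Illustrative monthly volumes in GB (invented for this example).
traffic = {"s3": 4000, "dynamodb": 500, "sqs": 1000, "sns": 50, "internet": 300}
result = endpoint_savings(traffic, az_count=2)
print(result, round(sum(result.values()), 2))
```

In this example the low-volume SNS traffic stays on the NAT Gateway because an Interface Endpoint's hourly charge would exceed the processing cost it removes.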

Practice Questions

Q1: A company hosts a popular blog on EC2 instances behind an Application Load Balancer in a single AWS region. Users are distributed globally, and the current monthly data transfer OUT to the internet is 8 TB, resulting in high costs. Which solution provides the greatest cost reduction while maintaining performance?

(a) Enable S3 Transfer Acceleration and move all static content to S3

(b) Deploy Amazon CloudFront with the ALB as the origin and enable caching for static content

(c) Implement AWS Direct Connect to reduce data egress charges

(d) Replicate the architecture to multiple AWS regions closer to users

Ans: (b)

CloudFront reduces egress costs because CloudFront-to-internet transfer rates are lower than EC2/ALB-to-internet rates, with the gap growing at higher volume tiers, and CloudFront caches content at edge locations, reducing origin load and transfer volumes. Option (a) is incorrect because S3 Transfer Acceleration is for upload optimization and actually increases costs; option (c) is incorrect because Direct Connect is for on-premises connectivity, not internet users; option (d) is incorrect because multi-region deployments increase costs with cross-region replication and do not inherently reduce egress charges compared to CloudFront's global edge network.

Q2: An application running in private subnets across three Availability Zones requires access to DynamoDB, S3, and external software repositories on the internet. The current architecture uses a NAT Gateway in each AZ. Which combination of changes minimizes data transfer costs while maintaining functionality? (Select TWO)

(a) Implement Gateway VPC Endpoints for DynamoDB and S3

(b) Replace NAT Gateways with NAT Instances

(c) Implement Interface VPC Endpoints for DynamoDB and S3

(d) Consolidate to a single NAT Gateway in one AZ

(e) Move all instances to public subnets with Internet Gateway

Ans: (a) and (d)

Gateway VPC Endpoints for DynamoDB and S3 eliminate all data processing charges for these services (free), removing a significant portion of NAT Gateway costs. Consolidating to a single NAT Gateway eliminates two of the hourly NAT Gateway charges (saving approximately 0.09 USD per hour) while accepting cross-AZ data transfer charges (0.01 USD per GB) for instances in other AZs, which is typically a net savings. Option (b) introduces management overhead and instance costs without guaranteed savings; option (c) is incorrect because Interface Endpoints incur charges (0.01 USD per hour per AZ + data processing) while Gateway Endpoints for these services are free; option (e) violates the private subnet requirement and creates security concerns.
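
Whether consolidation is a net saving depends on how much traffic from the other two AZs must now cross an AZ boundary to reach the remaining NAT Gateway. A rough sketch, assuming a 30-day month and writing \(V\) for that monthly volume in GB and \(c\) for the effective cross-AZ charge per GB (both symbols are introduced here for illustration):

\[
\underbrace{2 \times 0.045 \times 720}_{\text{hourly charges removed}} = 64.80 \ \text{USD},
\qquad
c\,V < 64.80 \;\Longrightarrow\; V < \frac{64.80}{c}
\]

With \(c = 0.01\) USD per GB the threshold is 6,480 GB per month; if the traffic is metered on both sides of the AZ boundary (\(c = 0.02\)), it drops to 3,240 GB per month.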

Q3: A financial services company needs to transfer 500 GB of transaction data daily from their on-premises data center to AWS for processing, and retrieve approximately 100 GB of reports daily back to on-premises. This volume is consistent year-round. What is the most cost-effective solution?

(a) Use VPN connection over the internet for all data transfers

(b) Implement AWS Direct Connect with a 1 Gbps connection

(c) Use AWS Snowball with weekly pickup and delivery

(d) Implement S3 Transfer Acceleration for uploads and standard S3 for downloads

Ans: (b)

With 600 GB total daily (approximately 18 TB monthly), and crucially 100 GB daily outbound from AWS (3 TB monthly), Direct Connect reaches roughly break-even on cost while adding reliability. The 1 Gbps port costs approximately 216 USD monthly (0.30 USD × 24 × 30), and data transfer OUT via Direct Connect is approximately 0.02 USD per GB (60 USD for 3 TB) versus 0.09 USD per GB via internet (270 USD for 3 TB). The 210 USD monthly egress saving nearly offsets the port cost (276 USD total versus 270 USD), and the dedicated link adds consistent performance for a year-round workload. Option (a) has no port cost but higher egress charges, its own hourly VPN connection fee, and lower reliability; option (c) is for one-time or periodic transfers, not daily continuous transfers; option (d) adds significant cost with Transfer Acceleration charges (0.04-0.08 USD per GB on 500 GB daily = 600-1200 USD monthly) without justification since inbound transfer is already free.

Q4: A company uses RDS Multi-AZ MySQL database in us-east-1 with three read replicas distributed across three Availability Zones. The application is read-heavy with a 90:10 read-to-write ratio. Database-related data transfer costs are higher than expected. What should the Solutions Architect check FIRST to identify the cost issue?

(a) Whether the database is using encrypted connections

(b) Whether the application servers are reading from random read replicas across all AZs

(c) Whether the database instance type is appropriately sized

(d) Whether Multi-AZ replication is causing excessive data transfer

Ans: (b)

If application servers in AZ-A are reading from read replicas in AZ-B or AZ-C, cross-AZ data transfer charges (0.01 USD per GB in each direction) accumulate rapidly with high read volume. This is the most common and impactful cost driver in this scenario and the first thing to check. Option (a) is incorrect because encryption does not affect data transfer charges; option (c) relates to compute costs, not data transfer; option (d) is incorrect because replication traffic between the Multi-AZ primary and standby is not billed as data transfer, whereas cross-AZ application-to-read-replica traffic is billed and is avoidable by routing each application server to same-AZ read replicas.

Q5: An e-commerce company serves static product images from S3 Standard storage class via CloudFront to global users. The total image library is 20 TB with approximately 500 GB of images accessed frequently (multiple times daily) and the remaining 19.5 TB accessed infrequently (once per month on average). The company wants to optimize costs. What is the MOST cost-effective storage strategy?

(a) Move all images to S3 Intelligent-Tiering to automatically optimize storage costs

(b) Move infrequently accessed images to S3 Standard-IA and keep frequently accessed images in S3 Standard

(c) Move all images to S3 Glacier and accept longer retrieval times

(d) Keep all images in S3 Standard since S3-to-CloudFront transfer is free regardless of storage class

Ans: (b)

While S3-to-CloudFront transfer is indeed free regardless of storage class, storage costs differ significantly. S3 Standard-IA is cheaper for storage (approximately 0.0125 USD per GB vs. 0.023 USD per GB for Standard) and since CloudFront serves the content, the retrieval fees (0.01 USD per GB) apply only when CloudFront fetches from S3 origin (cache misses). For infrequently accessed content with low cache-miss rates, the storage savings exceed occasional retrieval costs. Option (a) incurs monitoring fees per object (thousands or millions of objects = significant cost) without sufficient benefit; option (c) causes unacceptable performance degradation with Glacier retrieval times; option (d) misses the opportunity to reduce storage costs for 97.5% of the data, even though transfer costs remain zero.
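
The trade-off in this answer can be checked with a quick calculation. The sketch assumes the worst case for retrieval fees, in which every infrequently accessed GB is fetched from the origin once per month. It ignores request charges and Standard-IA's minimum duration and object-size rules, and it uses the approximate rates quoted above. The function name is hypothetical.

```python
def monthly_storage_cost(stored_gb: float, storage_rate: float,
                         retrieved_gb: float = 0.0,
                         retrieval_rate: float = 0.0) -> float:
    """Monthly storage charge plus per-GB retrieval fees, in USD."""
    return stored_gb * storage_rate + retrieved_gb * retrieval_rate


cold_gb = 19.5 * 1024  # the infrequently accessed 19.5 TB, in GB

standard = monthly_storage_cost(cold_gb, 0.023)
standard_ia = monthly_storage_cost(cold_gb, 0.0125,
                                   retrieved_gb=cold_gb, retrieval_rate=0.01)

print(round(standard, 2), round(standard_ia, 2), round(standard - standard_ia, 2))
```

Even in this worst case Standard-IA comes out slightly ahead, and the gap widens when fewer GB are actually retrieved from the origin in a given month.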

Q6: A media company streams video content stored in S3 to users worldwide. The current architecture uses CloudFront for distribution. The billing team notices that despite CloudFront usage, data transfer costs remain high. What is the MOST likely cause?

(a) CloudFront is not caching content properly, causing excessive origin fetches

(b) Users are bypassing CloudFront and accessing S3 directly via public S3 URLs

(c) S3-to-CloudFront data transfer is being charged despite being documented as free

(d) CloudFront request charges are higher than expected for video streaming

Ans: (b)

If users access S3 directly (e.g., via bucket website endpoints or public object URLs), they bypass CloudFront entirely, incurring the higher S3-to-internet egress rates (approximately 0.09 USD per GB vs. CloudFront's lower rates). This is the most common architectural error causing unexpectedly high costs despite CloudFront deployment. Option (a) would increase origin fetch volume but CloudFront egress rates would still apply to user-facing traffic; option (c) is factually incorrect as S3-to-CloudFront transfer is free; option (d) relates to request charges (per 10,000 requests), which are typically minimal compared to data transfer charges and would not explain high data transfer costs specifically.

Quick Review

- Data transfer IN to AWS from internet is always free; charges apply to data transfer OUT and between regions/AZs

- Same-region data transfer is free when using private IP addresses; cross-AZ costs 0.01 USD per GB each direction

- CloudFront reduces egress costs because S3/EC2-to-CloudFront origin transfer is free and CloudFront-to-internet rates are lower than direct egress (e.g., 0.085 vs. 0.09 USD per GB at the first US tier, with larger gaps at higher volume tiers)

- Gateway VPC Endpoints for S3 and DynamoDB are completely free; eliminate NAT Gateway processing charges for these services

- Interface VPC Endpoints cost 0.01 USD per hour per AZ + 0.01 USD per GB; cheaper than NAT Gateway (0.045 USD per GB) for high-volume AWS service access

- NAT Gateway costs 0.045 USD per hour + 0.045 USD per GB processed; consolidating to fewer NAT Gateways saves hourly costs but adds cross-AZ charges

- Direct Connect is cost-effective for sustained high-volume transfers (typically > 3-5 TB monthly outbound) due to lower egress rates (approximately 0.02 USD per GB vs. 0.09 USD per GB internet)

- S3 Transfer Acceleration targets transfer speed (typically long-distance uploads) and adds 0.04-0.08 USD per GB; do not use for cost reduction

- Cross-region data transfer is always charged, whichever direction the data flows; consolidate resources in single region or use CloudFront instead of multi-region deployments

- RDS read replicas should be accessed from same-AZ application servers to avoid cross-AZ charges; use Route 53 or connection pooling for same-AZ routing