Important Formulas: Probability

What is Probability?

Probability is a quantitative measure of the likelihood that a specified event will occur in a random experiment. If we denote the probability of an event by a variable x, then 1 - x denotes the probability that the event does not occur. Probability provides a way to predict outcomes under uncertainty and is central to analysis in engineering, computer science and many applied fields.

Everyday idea and formal view

In everyday language we say, for example, "It may rain today" to express uncertainty. In probability theory that uncertainty is quantified so that it can be used in calculations, comparisons and decision-making. Formally, probability is attached to events that are subsets of a well-defined sample space.

Basic terms and examples

Probability or Chance: The quantitative measure of the chance of occurrence of a particular event.

Example: Saying "It may rain today" expresses an uncertain event; probability gives a numerical measure of that uncertainty.

Experiment: An operation or process that produces well-defined outcomes.

Random experiment: An experiment where all possible outcomes are known but the exact outcome of a particular trial cannot be predicted in advance.

Examples:

- Tossing a fair coin: Outcomes are Head (H) or Tail (T).

- Throwing an unbiased die: A die has six faces with dots 1 to 6. When rolled, the top face shows a number from 1 to 6, each equally likely.

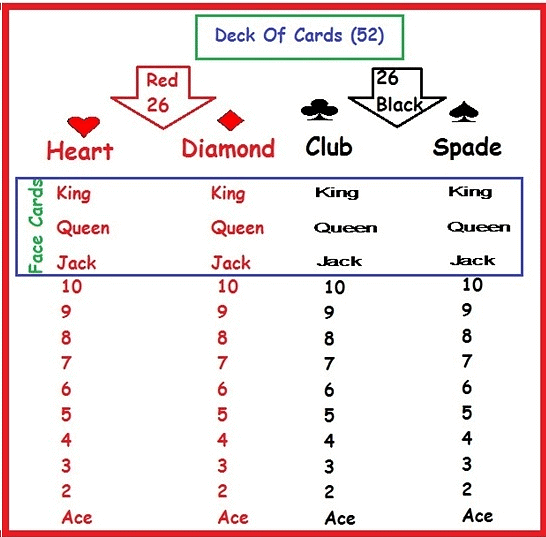

- Drawing a card from a shuffled pack: A standard deck has 52 cards split into four suits, each of 13 cards: Ace, 2-10, Jack, Queen, King. Hearts (♥) and Diamonds (♦) are red; Spades (♠) and Clubs (♣) are black. Kings, Queens and Jacks are face cards.

- Drawing a ball from a bag: Taking one ball at random from a mix of coloured balls.

Try yourself: A bag has 5 white marbles, 8 red marbles and 4 purple marbles. If one marble is drawn at random, what is the probability that it is not purple?

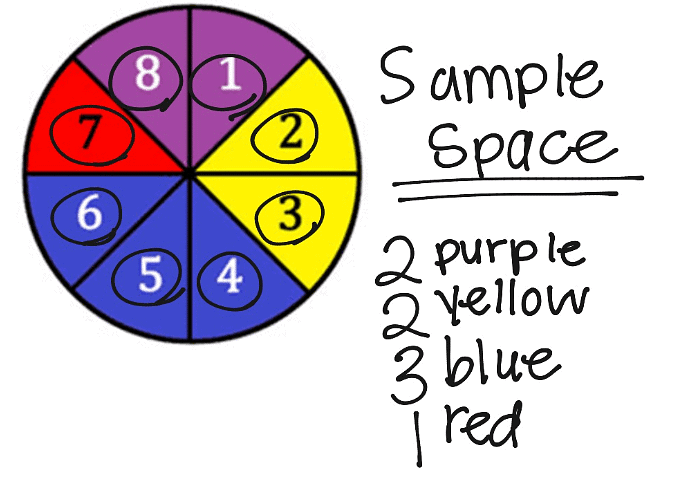

Sample space: The set of all possible outcomes of an experiment. Denoted by S.

Examples:

- Coin toss: S = {H, T}.

- One die: S = {1, 2, 3, 4, 5, 6}.

- Two coins: S = {HH, HT, TH, TT}.
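The sample spaces listed above can also be generated mechanically. The following is a minimal Python sketch, assuming ordered outcomes; the variable names (`coin`, `die`, `two_coins`, `two_dice`) are illustrative and not part of the original notes.

```python
from itertools import product

# Single-trial sample spaces.
coin = ["H", "T"]
die = [1, 2, 3, 4, 5, 6]

# Two coins: every ordered pair of single-coin outcomes.
two_coins = ["".join(pair) for pair in product(coin, repeat=2)]
print(two_coins)  # ['HH', 'HT', 'TH', 'TT']

# Two dice: 6 * 6 = 36 ordered pairs such as (1, 1), (1, 2), ...
two_dice = list(product(die, repeat=2))
print(len(two_dice))  # 36
```

Treating outcomes as ordered pairs keeps every outcome equally likely, which the classical formula relies on.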

Event: Any subset of the sample space S. Events are written as capital letters A, B, C, etc.

Examples:

- Getting Head when a coin is tossed is an event.

- Getting a 1 when a die is thrown is an event.

Probability of an Event

Let E be an event and S the sample space for a random experiment with equally likely outcomes. The probability of E is defined by the classical formula

P(E) = n(E) / n(S)

where n(E) is the number of outcomes favourable to event E and n(S) is the total number of possible outcomes in S.

Important properties

- P(S) = 1.

- 0 ≤ P(E) ≤ 1 for any event E.

- P(∅) = 0, since the empty event cannot occur.

Solved Examples

(i) A coin is tossed once. What is the probability of getting Head?

Total number of outcomes possible when a coin is tossed = n(S) = 2.

E = event of getting Head = {H}. Hence n(E) = 1.

Therefore P(E) = n(E)/n(S) = 1/2.

(ii) Two dice are rolled. What is the probability that the sum on the top faces will be greater than 9?

Total number of outcomes when two dice are rolled = n(S) = 6 × 6 = 36.

Event E = outcomes where the sum > 9. These ordered pairs are (4,6), (5,5), (5,6), (6,4), (6,5), (6,6).

Count of favourable outcomes n(E) = 6.

Therefore P(E) = n(E)/n(S) = 6/36 = 1/6.
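Both solved examples can be reproduced by counting outcomes directly. This is a small sketch of the classical formula P(E) = n(E) / n(S), assuming equally likely outcomes; the helper name `classical_probability` is hypothetical.

```python
from fractions import Fraction
from itertools import product

def classical_probability(sample_space, is_favourable):
    """Return n(E) / n(S), assuming all outcomes are equally likely."""
    outcomes = list(sample_space)
    favourable = [o for o in outcomes if is_favourable(o)]
    return Fraction(len(favourable), len(outcomes))

# (i) One coin toss: probability of Head.
p_head = classical_probability(["H", "T"], lambda o: o == "H")

# (ii) Two dice: probability that the sum exceeds 9.
two_dice = product(range(1, 7), repeat=2)
p_sum_gt_9 = classical_probability(two_dice, lambda o: sum(o) > 9)

print(p_head, p_sum_gt_9)  # 1/2 1/6
```

Using `Fraction` keeps the results exact, so they can be compared with the hand-computed values.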

Types of Events

Equally likely events: Events are equally likely if each has the same chance of occurring, so that none is favoured over another.

Examples: Coin toss (H and T equally likely); fair die faces 1-6 are equally likely.

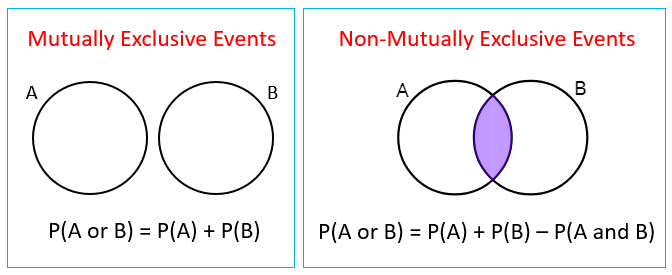

Mutually exclusive events: Two events are mutually exclusive if they cannot occur together; their intersection is empty: A ∩ B = ∅.

Examples: Getting Head and Tail on a single coin toss are mutually exclusive. Faces 1 and 2 on a single die roll are mutually exclusive.

Note: If A = {2, 4, 6} and B = {4, 5, 6}, then A ∩ B ≠ ∅, so A and B are not mutually exclusive.
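Events that are sets of outcomes can be tested for mutual exclusivity with ordinary set operations. A brief Python sketch using the events from the note (the single-face events `face_1` and `face_2` are illustrative names):

```python
# Events on a single die roll, written as sets of outcomes.
A = {2, 4, 6}
B = {4, 5, 6}

# A and B share outcomes, so they are not mutually exclusive.
print(sorted(A & B))    # [4, 6]
print(A.isdisjoint(B))  # False

# Faces 1 and 2 cannot occur together on one roll.
face_1, face_2 = {1}, {2}
print(face_1.isdisjoint(face_2))  # True
```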

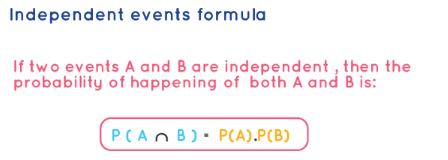

Independent events: Events A and B are independent when the occurrence (or non-occurrence) of one does not affect the probability of the other.

Example: Tossing a coin twice. The result of the first toss does not affect the second toss; events "Tail on first toss" and "Tail on second toss" are independent.
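One standard way to test independence is the product criterion P(A ∩ B) = P(A)·P(B), which these notes state later as the multiplication rule. The sketch below checks it for the two-toss example by enumeration, assuming a fair coin; the helper `prob` is a hypothetical name.

```python
from fractions import Fraction
from itertools import product

# Sample space for two tosses of a fair coin (ordered outcomes).
S = list(product("HT", repeat=2))

def prob(event):
    """Classical probability of an event given as a list of outcomes in S."""
    return Fraction(len(event), len(S))

A = [o for o in S if o[0] == "T"]   # Tail on first toss
B = [o for o in S if o[1] == "T"]   # Tail on second toss
A_and_B = [o for o in A if o in B]  # Tail on both tosses

print(prob(A), prob(B), prob(A_and_B))     # 1/2 1/2 1/4
print(prob(A_and_B) == prob(A) * prob(B))  # True
```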

Simple events: Events that involve a single outcome.

Examples: Getting Head when a coin is tossed; getting 1 when a die is thrown.

Compound events: Events formed by combining two or more simple events, e.g., joint occurrence across trials.

Example: When two coins are tossed, the event "first toss Head and second toss Tail" is a compound event.

Exhaustive events: A set of events is exhaustive if their union equals the sample space S; all possible outcomes are covered.

Examples: For a coin toss, {H, T} is exhaustive. For two coin tosses there are 4 (= 2^2) exhaustive outcomes: (H,H), (H,T), (T,H), (T,T).

Try yourself: If two coins are tossed, what is the probability of getting two tails?

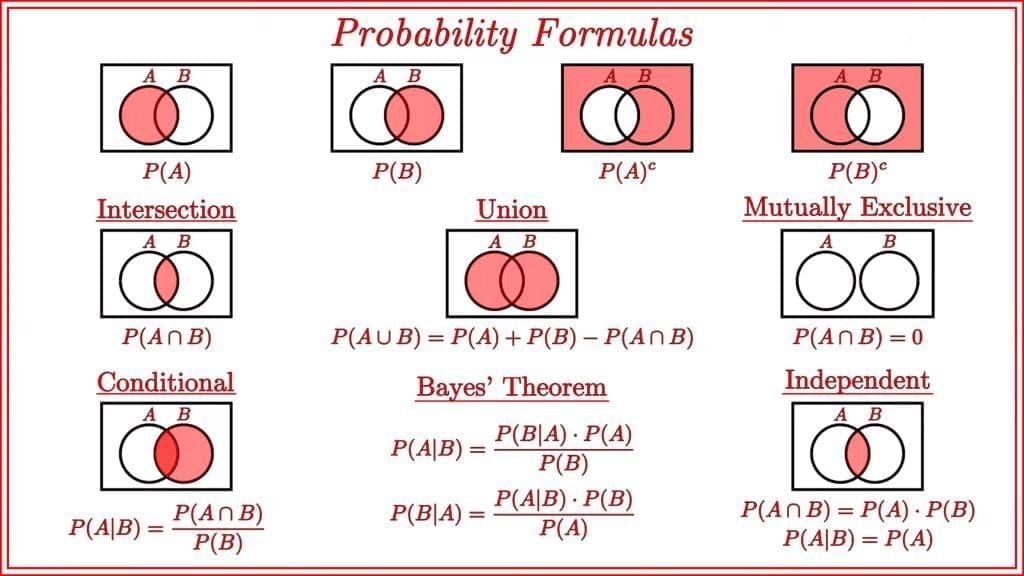

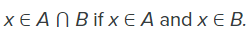

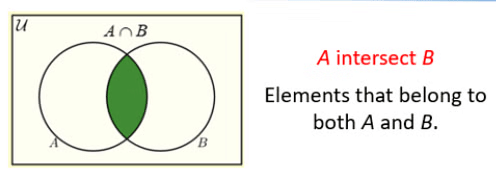

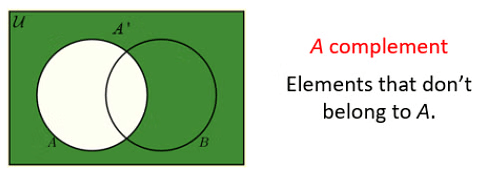

Unions, Intersections and Complements

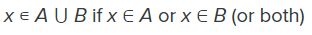

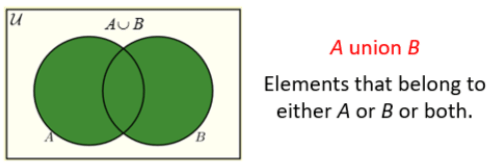

Union (A ∪ B): The union of two sets contains elements that are in A, or in B, or in both.

Intersection (A ∩ B): The intersection contains only elements that are in both A and B.

Complement (A^c or Ā): The complement of A is the set of all outcomes in S that are not in A.

Venn diagrams illustrating union, intersection and complements:

Venn Diagrams and Formulae

Algebra of Events

Let A and B be events in sample space S. Useful identities (algebra of events):

- Commutative: A ∪ B = B ∪ A; A ∩ B = B ∩ A.

- Associative: (A ∪ B) ∪ C = A ∪ (B ∪ C); (A ∩ B) ∩ C = A ∩ (B ∩ C).

- Distributive: A ∩ (B ∪ C) = (A ∩ B) ∪ (A ∩ C); A ∪ (B ∩ C) = (A ∪ B) ∩ (A ∪ C).

- De Morgan's laws: (A ∪ B)^c = A^c ∩ B^c; (A ∩ B)^c = A^c ∪ B^c.

- Complement rule: P(A^c) = 1 - P(A).
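These identities can be spot-checked on a concrete sample space with Python sets. The sketch below uses a single die roll; the third event `C` and the helper `complement` are chosen here purely for illustration. A check on one example is not a proof, only a sanity test.

```python
# Sample space for one die roll and three example events.
S = set(range(1, 7))
A = {2, 4, 6}
B = {4, 5, 6}
C = {1, 2}  # illustrative third event, not from the original notes

def complement(event):
    """Outcomes in S that are not in the event."""
    return S - event

# De Morgan's laws.
assert complement(A | B) == complement(A) & complement(B)
assert complement(A & B) == complement(A) | complement(B)

# Distributive laws.
assert A & (B | C) == (A & B) | (A & C)
assert A | (B & C) == (A | B) & (A | C)

print(sorted(complement(A | B)))  # [1, 3]
```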

Other Important Calculations

Addition theorem (general):

P(A ∪ B) = P(A) + P(B) - P(A ∩ B).

If A and B are mutually exclusive then P(A ∩ B) = 0 and hence P(A ∪ B) = P(A) + P(B).
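As a quick numerical check of the addition theorem, the following sketch evaluates both sides for two overlapping events on one die roll. The events A = {2, 4, 6} and B = {4, 5, 6} and the helper `prob` are assumptions made for this illustration.

```python
from fractions import Fraction

S = set(range(1, 7))  # one roll of a fair die
A = {2, 4, 6}
B = {4, 5, 6}

def prob(event):
    """Classical probability of an event (a subset of S)."""
    return Fraction(len(event), len(S))

lhs = prob(A | B)                      # direct count of A ∪ B
rhs = prob(A) + prob(B) - prob(A & B)  # addition theorem

print(lhs, rhs)  # 2/3 2/3
```

Subtracting P(A ∩ B) removes the outcomes 4 and 6, which would otherwise be counted twice.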

Independent events:

If A and B are independent then P(A ∩ B) = P(A)·P(B).

Example: Two dice are rolled. What is the probability of getting an odd number on the first die and an even number on the second?

Total number of outcomes for one die = 6.

Let A = event of getting an odd number on the first die = {1, 3, 5}; n(A) = 3 so P(A) = 3/6 = 1/2.

Let B = event of getting an even number on the second die = {2, 4, 6}; n(B) = 3 so P(B) = 3/6 = 1/2.

The two dice are independent, so P(A ∩ B) = P(A)·P(B) = (1/2)·(1/2) = 1/4.

Note: If either die may be the odd one, the two orders (odd, even) and (even, odd) are mutually exclusive, and the probability is 1/4 + 1/4 = 1/2.
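The product-rule answer of 1/4 applies when one specified die is odd and the other is even. A short enumeration, sketched below with illustrative variable names, confirms this and also shows the result when either die is allowed to be the odd one.

```python
from fractions import Fraction
from itertools import product

# All 36 ordered outcomes (first die, second die).
S = list(product(range(1, 7), repeat=2))

# First die odd and second die even.
odd_then_even = [(a, b) for a, b in S if a % 2 == 1 and b % 2 == 0]
p_odd_then_even = Fraction(len(odd_then_even), len(S))

# Exactly one die odd, in either position.
one_odd_one_even = [(a, b) for a, b in S if (a + b) % 2 == 1]
p_either_order = Fraction(len(one_odd_one_even), len(S))

print(p_odd_then_even)  # 1/4
print(p_either_order)   # 1/2
```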

Complement rule restated: For any event A, P(A) + P(A^c) = 1.

Odds on an event:

If x outcomes are favourable to event E and y outcomes are unfavourable, then the odds in favour of E are x : y and the odds against E are y : x.

Relating odds to probability: If the probability of E is p, then odds in favour = p : (1 - p).

Example: What are the odds in favour of and against getting a 1 when a die is rolled?

Event E = rolling a 1. Favourable outcomes x = 1. Unfavourable outcomes y = 5.

Odds in favour of E = 1 : 5. Odds against E = 5 : 1.
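Converting between odds and probability is mechanical. The sketch below assumes 0 ≤ p < 1, so that the odds are well defined; the function names `odds_in_favour` and `probability_from_odds` are hypothetical.

```python
from fractions import Fraction

def odds_in_favour(p):
    """Return (x, y) in lowest terms so that the odds in favour are x : y.

    Assumes 0 <= p < 1; p = 1 would divide by zero.
    """
    p = Fraction(p)
    ratio = p / (1 - p)
    return ratio.numerator, ratio.denominator

def probability_from_odds(x, y):
    """Probability of an event whose odds in favour are x : y."""
    return Fraction(x, x + y)

print(odds_in_favour(Fraction(1, 6)))  # (1, 5)
print(probability_from_odds(1, 5))     # 1/6
```

The odds against the event are obtained by swapping the pair, here 5 : 1.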

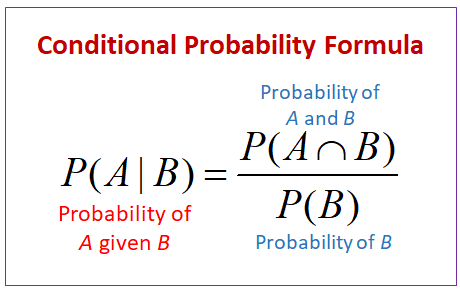

Conditional Probability

For events A and B with P(B) > 0, the conditional probability of A given that B has occurred is

P(A | B) = P(A ∩ B) / P(B)

This expresses how probabilities are updated when we know that B has happened.

Example: A bag contains 5 black and 4 blue balls. Two balls are drawn one by one without replacement. What is the probability of drawing a blue ball in the second draw if a black ball was drawn in the first draw?

Solution: Let A be the event "first ball drawn is black" and B be the event "second ball drawn is blue". We need P(B | A).

Total balls initially = 5 black + 4 blue = 9.

After a black ball is drawn on the first draw, remaining balls = 8.

Remaining blue balls = 4 (unchanged by first draw because a black was drawn).

Therefore P(B | A) = 4 / 8 = 1 / 2.
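The same answer follows from the defining formula P(B | A) = P(A ∩ B) / P(A). The sketch below enumerates all ordered draws of two distinct balls, assuming each ordered pair is equally likely; the variable names are illustrative.

```python
from fractions import Fraction
from itertools import permutations

# Label the 9 balls by index so that each ball is distinct.
balls = ["black"] * 5 + ["blue"] * 4

# All ordered draws of two different balls: 9 * 8 = 72.
draws = list(permutations(range(len(balls)), 2))

first_black = [d for d in draws if balls[d[0]] == "black"]            # event A
black_then_blue = [d for d in first_black if balls[d[1]] == "blue"]   # A ∩ B

p_a = Fraction(len(first_black), len(draws))            # 40/72 = 5/9
p_a_and_b = Fraction(len(black_then_blue), len(draws))  # 20/72 = 5/18
p_b_given_a = p_a_and_b / p_a

print(p_a, p_a_and_b, p_b_given_a)  # 5/9 5/18 1/2
```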

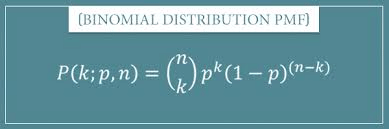

Binomial Probability Distribution

Definition: A binomial experiment is a sequence of n independent trials, each with exactly two possible outcomes commonly called "success" and "failure". The probability of success on each trial is p and is constant across trials. The probability of failure is q = 1 - p.

Conditions for a binomial experiment:

- Each trial has two possible outcomes (success/failure).

- There are a fixed number n of trials.

- Outcomes of trials are independent.

- The probability of success p remains the same in each trial.

Binomial probability formula: The probability of getting exactly r successes in n independent trials is

P(X = r) = C(n, r) · p^r · q^(n-r)

where C(n, r) = nCr = n! / (r! (n - r)!) is the number of ways to choose which r trials are successes, p is the probability of success on a single trial and q = 1 - p is the probability of failure.
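The formula translates directly into code. A minimal Python sketch follows; the function name `binomial_pmf` and the example of 5 fair-coin tosses are assumptions made for illustration.

```python
from fractions import Fraction
from math import comb

def binomial_pmf(n, r, p):
    """Probability of exactly r successes in n independent trials."""
    p = Fraction(p)
    q = 1 - p
    return comb(n, r) * p**r * q**(n - r)

# Exactly 3 heads in 5 tosses of a fair coin: C(5, 3) / 2^5 = 10/32.
p_three_heads = binomial_pmf(5, 3, Fraction(1, 2))
print(p_three_heads)  # 5/16

# The probabilities over r = 0, ..., n sum to 1.
total = sum(binomial_pmf(5, r, Fraction(1, 2)) for r in range(6))
print(total)  # 1
```

`math.comb` requires Python 3.8 or later.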

Note: If n fair coins are tossed, the sample space size is 2^n. Since p = q = 1/2 for a fair coin, the probability of obtaining exactly r heads is

P(X = r) = C(n, r) · (1/2)^n = C(n, r) / 2^n

Summary of key formulas and rules

- Classical probability: P(E) = n(E) / n(S).

- Complement: P(A^c) = 1 - P(A).

- Addition rule: P(A ∪ B) = P(A) + P(B) - P(A ∩ B).

- Multiplication for independent events: P(A ∩ B) = P(A)·P(B) (if independent).

- Conditional probability: P(A | B) = P(A ∩ B) / P(B) provided P(B) > 0.

- Binomial probability: P(X = r) = C(n, r) p^r q^(n-r).

- Odds: odds in favour = x : y where x = favourable outcomes and y = unfavourable outcomes.
