Process Synchronization & Critical Section

Introduction of Process Synchronization

On the basis of synchronization, processes are categorised as one of the following two types:

- Independent Process: A process whose execution does not affect and is not affected by the execution of other processes. For example, a program that does not share any variables, files or other resources with other programs.

- Cooperative Process: A process whose execution may affect or be affected by other processes. Cooperating processes share resources such as files, shared memory, devices or global variables.

Process synchronization problems arise primarily for cooperative processes, because shared resources must be accessed in a controlled manner to maintain data consistency and correctness.

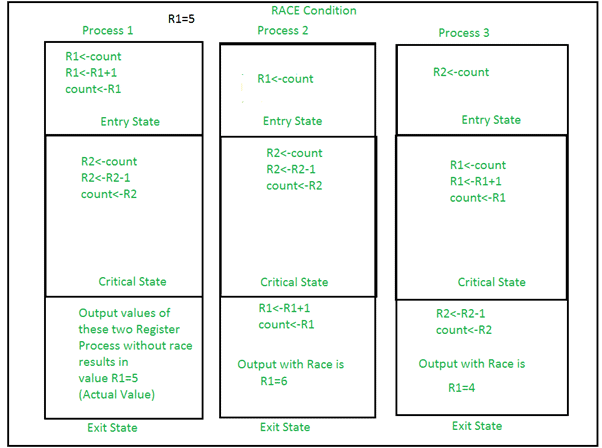

Race Condition

A race condition occurs when two or more processes access shared data concurrently and at least one of them modifies the data, and the final outcome depends on the relative timing (interleaving) of their execution. In other words, the result of execution depends on which process performs an operation first.

Race conditions typically occur inside a critical section: if multiple threads execute the critical section without proper synchronisation, different interleavings can produce different and incorrect results.

Race conditions are prevented by making critical sections behave atomically (appearing as an indivisible operation). Proper synchronisation primitives such as locks, atomic instructions or semaphores are used to prevent races.

Critical Section Problem

A critical section is a fragment of code that accesses shared variables or shared resources and therefore must not be executed by more than one process (or thread) at the same time.

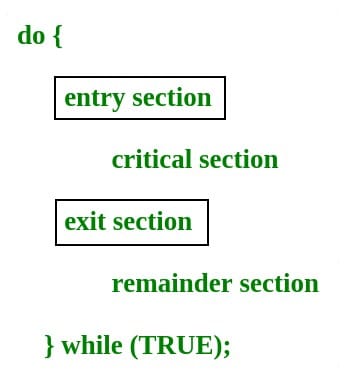

Typical structure of a process that uses a critical section:

acquireLock(); execute critical section; releaseLock(); execute remainder section;

The critical section problem is to design a protocol that each process can use to enter and exit its critical section while satisfying the following requirements.

Any correct solution must satisfy three properties:

- Mutual Exclusion: If a process is executing in its critical section, then no other process is allowed to execute in its critical section.

- Progress: If no process is in the critical section and some processes wish to enter, then the selection of the next process that will enter the critical section must not be postponed indefinitely. Only processes that are not in their remainder section should participate in the decision.

- Bounded Waiting (Fairness): There must be a bound on the number of times other processes are allowed to enter their critical sections after a process has made a request to enter and before that request is granted. This prevents starvation.

Peterson's Solution

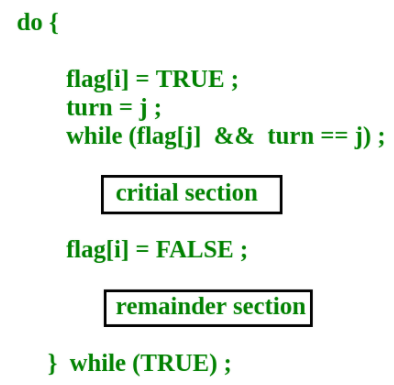

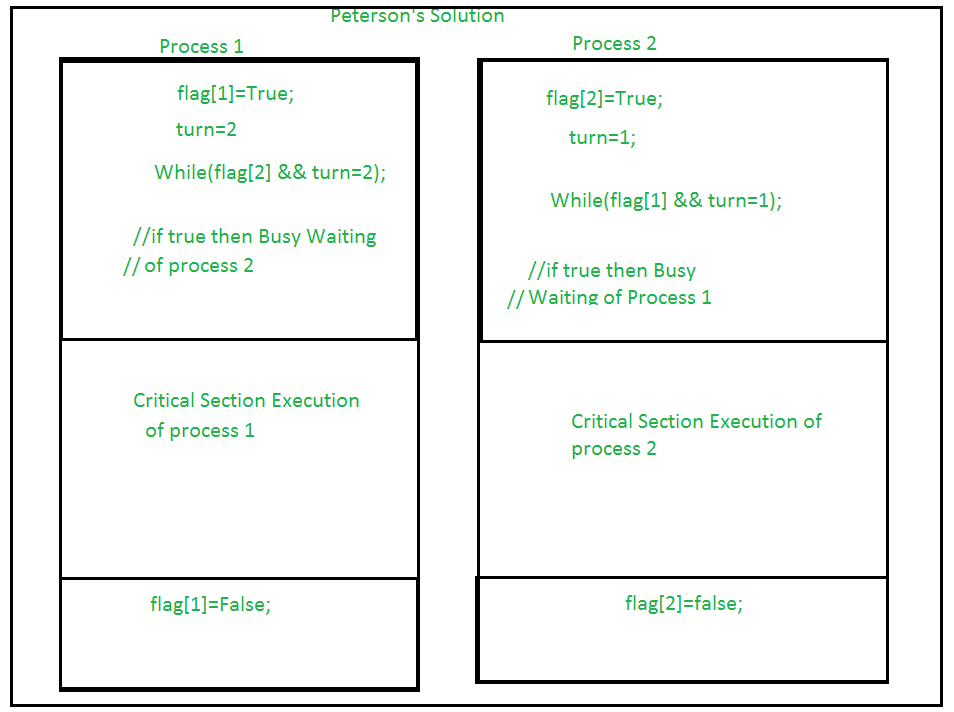

Peterson's Solution is a classical software algorithm that solves the critical section problem for two processes using only shared memory and simple variables.

The solution uses two shared variables:

- boolean flag[i]: indicates that process i wants to enter the critical section. Initially all flags are FALSE.

- int turn: indicates which process's turn it is to enter the critical section; used to resolve conflicts.

Peterson's algorithm ensures:

- Mutual Exclusion: Only one process can be inside the critical section at any time.

- Progress: If no process is in the critical section and some processes wish to enter, one of them will be allowed to enter without unnecessary delay.

- Bounded Waiting: Each process will get a fair chance to enter the critical section within a bounded number of attempts (no starvation).

Disadvantages of Peterson's Solution

- It uses busy waiting (spin waiting) while a process waits to enter the critical section.

- It is limited to two processes in its basic form; generalising to n processes requires more complex algorithms.

Test-and-Set

Test-and-Set is a hardware-provided atomic instruction used for synchronisation. It operates on a shared lock variable that takes values 0 (unlocked) or 1 (locked).

- 0 - Unlocked

- 1 - Locked

Typical usage: before entering the critical section a process atomically tests and sets the lock. If the lock was previously 0, the operation sets it to 1 and returns 0 so the process can enter; otherwise it returns 1 and the process must wait (busy-wait) until the lock becomes free.

Using test-and-set preserves mutual exclusion and progress (in the sense that the machine makes forward progress), but simple test-and-set spinlocks do not guarantee bounded waiting (starvation can occur) and they do not enforce FIFO order.

The enter_CS() and leave_CS() functions to implement critical section of a process are realised using test-and-set instruction as follows:

int TestAndSet(int &lock) { int initial = lock; lock = 1; return initial; } void enter_CS(X) { while test-and-set(X) ; } void leave_CS(X) { X = 0; }

In the above solution, X is a memory location associated with the CS and is initialized to 0. Now, consider the following statements:

I. The above solution to CS problem is deadlock-free

II. The solution is starvation free.

III. The processes enter CS in FIFO order.

IV. More than one process can enter CS at the same time.

Which of the above statements is TRUE?

(a) I

(b) II and III

(c) II and IV

(d) IV

Ans: a

Explanation: The simple test-and-set solution prevents deadlock because the atomic test-and-set ensures mutual exclusion and at least one process can proceed; however, it does not implement any queueing or fairness mechanism, so starvation is possible and FIFO order is not guaranteed.

Semaphores

A semaphore is an integer variable used for process synchronisation that can be accessed only through two atomic operations: wait() (also called P or down) and signal() (also called V or up). Semaphores are implemented at the kernel/hardware level so that these operations are atomic.

Two common types of semaphores:

- Binary Semaphore: Values are 0 or 1. Acts like a mutex lock to provide mutual exclusion. When initialised to 1, a process does wait() to enter its critical section (making the semaphore 0) and signal() on exit (making it 1).

- Counting Semaphore: Can take non-negative integer values and is used to control access to a resource with multiple identical instances. Initialised to the number of available instances; each wait() decrements the count and each signal() increments it.

Semaphores can be used to implement blocking synchronisation: when a process performs wait() on a semaphore that is 0, it is put to sleep (blocked) instead of busy-waiting; when another process calls signal(), a blocked process is awakened.

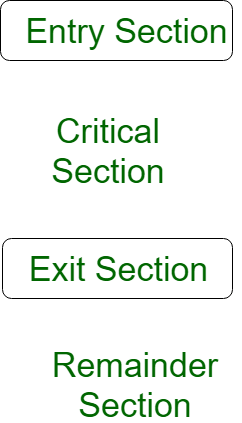

Critical Section in Synchronization

Critical section is a region of code that accesses shared resources and must be executed atomically to prevent data races. Examples of shared resources are shared memory locations, files, I/O ports or kernel data structures.

Uncontrolled concurrent access to such shared resources leads to inconsistent or unpredictable results; hence proper synchronisation is required, especially in kernel-mode programming such as device drivers where direct modification of kernel structures can create serious system errors.

Simple pattern used by threads/processes:

acquireLock(); execute critical section; releaseLock();

Different mechanisms to implement acquire/release include hardware atomic instructions (test-and-set, compare-and-swap), locks, semaphores and higher-level constructs such as monitors and mutexes.

Process Synchronization (Detailed)

Process synchronization coordinates processes that share data so that shared resources are used consistently and safely.

- Independent Process: A process that neither affects nor is affected by other processes while executing. Example: a program that does not share variables, files or databases with other programs.

- Cooperating Process: A process that affects or is affected by other processes during execution, typically because it shares files, variables or databases.

Racing condition

Racing conditionWhen cooperating processes access and modify the same shared resource concurrently, the final result may depend on the order of access; this is a race condition. Synchronisation solves this by allowing only one process to enter and manipulate shared data at a time within a critical section.

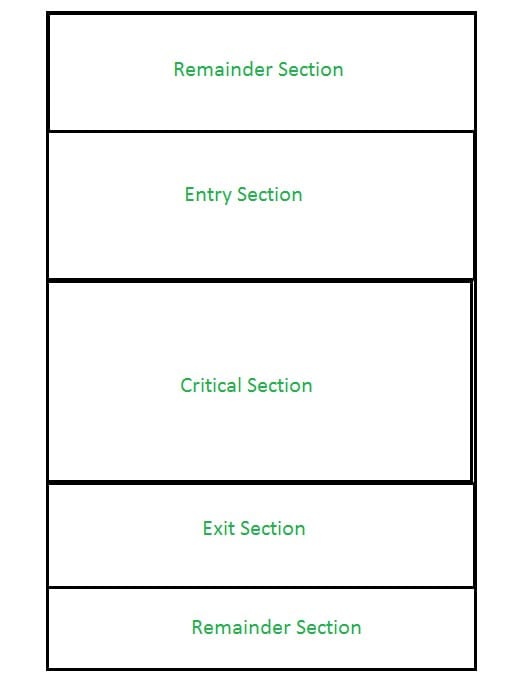

view of CS

view of CS We can view a process that uses a critical section as composed of these regions:

- Entry Section: Code that decides when the process may enter the critical section (contains synchronisation code).

- Critical Section: Code that accesses shared resources and must be executed mutually exclusively.

- Exit Section: Code that runs after the critical section to release locks or signal waiting processes so another process may enter.

- Remainder Section: The remainder of the process code that does not require synchronisation.

The critical section requirements (mutual exclusion, progress and bounded waiting) must be satisfied by any synchronisation scheme.

Approaches to Process Synchronization

Process synchronisation is normally handled by two broad approaches:

- Software Approach:

Software-only algorithms use shared variables and carefully designed protocols to enforce mutual exclusion. Simple schemes for two processes may use temporary variables (for example a turn variable or boolean flag array). These simple schemes often lead to busy waiting and may not satisfy all three critical section requirements.

Peterson's Solution is a classic software approach that uses a pair of flags and a turn variable to guarantee mutual exclusion, progress and bounded waiting for two processes.

Peterson's Algorithm

Peterson's Algorithm - Hardware Approach:

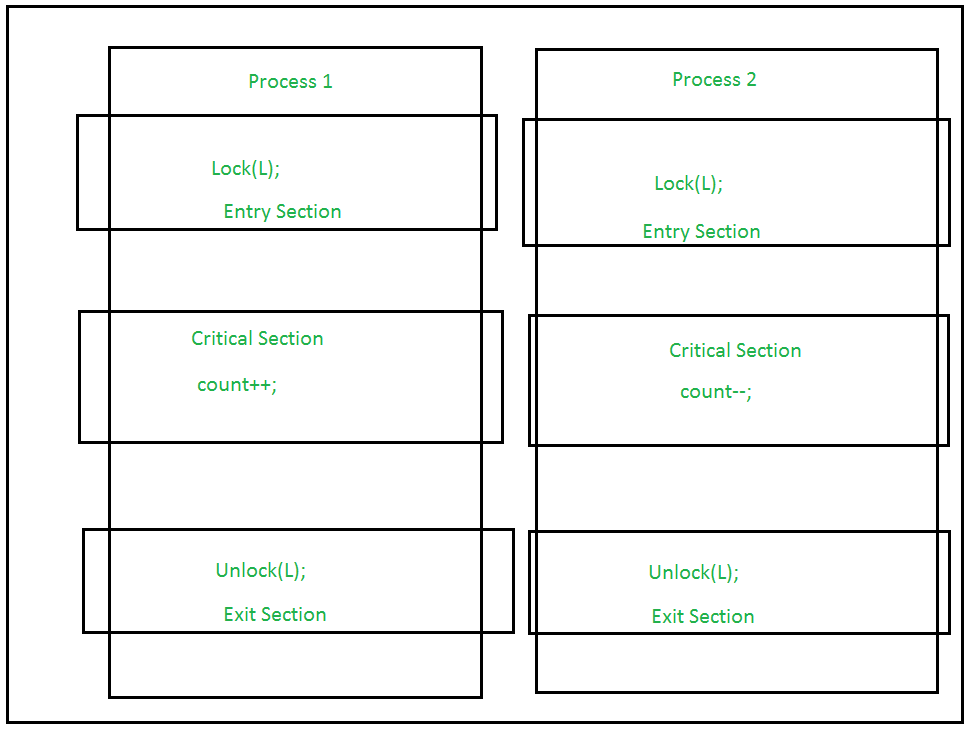

Hardware support provides atomic instructions such as Test-and-Set, Compare-and-Swap (CAS), or Atomic Exchange. These atomic primitives can be used to build locks (spinlocks) and other synchronisation constructs that guarantee mutual exclusion.

Locking is often implemented in the entry section; the lock prevents other processes from entering the critical section. On exit, the lock is released so other waiting processes can proceed.

Lock

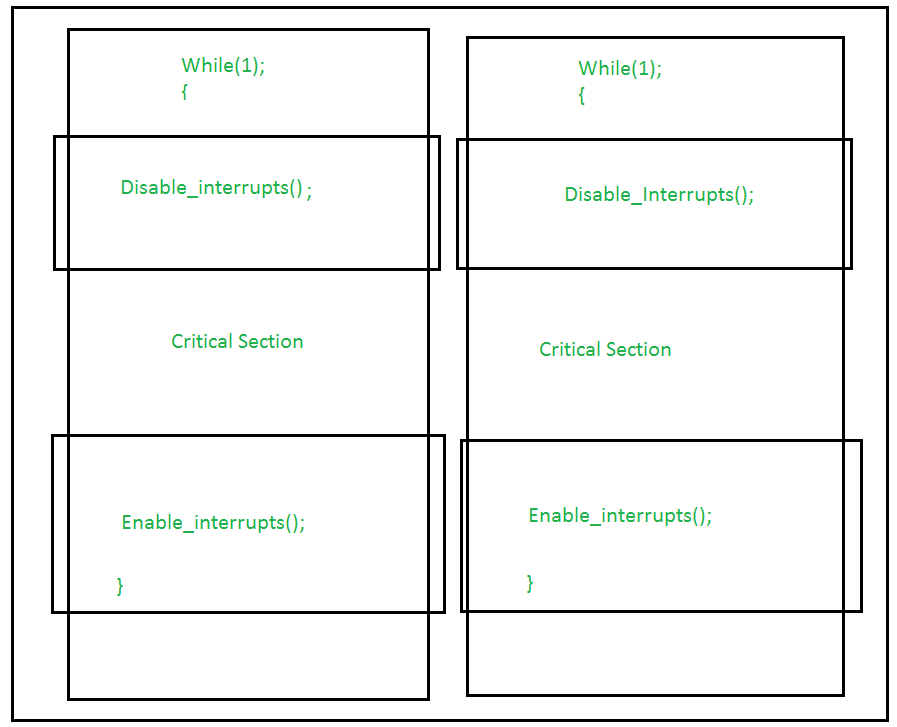

LockUsing interrupts: Disabling interrupts is a simple technique (often used in kernel code on uniprocessor systems) to prevent context switches while executing a critical section. When interrupts are disabled a context switch cannot occur; therefore no other process can run and disturb the critical section. This approach is easy to implement but unsuitable for multiprocessor systems and long critical sections because it prevents the CPU from servicing interrupts.

Interrupts

Interrupts

Test-and-Set Operation: A hardware atomic primitive that returns the old value of a lock and sets it to 1 in one indivisible operation. Because the operation is atomic, no two processors will both see the lock as free; one will succeed and the others will see it as set. Other similar atomic operations are Compare-and-Swap and Fetch-and-Add. These primitives are the building blocks for spinlocks, mutexes and other synchronisation mechanisms.

Additional Notes and Common Examples

Busy waiting (spinlocks) is simple and can be efficient when locks are held for a very short duration or on multiprocessor systems where the waiting thread can spin while another processor releases the lock. However, busy waiting wastes CPU cycles and is unsuitable when locks may be held for longer periods.

Blocking synchronisation (sleep/wakeup) using semaphores or condition variables avoids busy waiting by putting waiting threads to sleep and waking them when the resource becomes available. This is usually preferred in general-purpose systems.

Producer-Consumer (bounded buffer) example (brief): Use one counting semaphore to track the number of full slots, another counting semaphore to track the number of empty slots, and a mutex (binary semaphore) to protect access to the buffer. This combination enforces mutual exclusion, correct resource counting and avoids busy waiting for resources.

Summary

Process synchronisation ensures correct, race-free access to shared resources. The critical section problem requires solutions that provide mutual exclusion, progress and bounded waiting. Solutions may be software-only (Peterson, Dekker, Lamport's Bakery algorithm) or hardware-assisted (test-and-set, compare-and-swap). Semaphores provide a powerful, general mechanism for signalling and resource counting, while higher-level constructs (mutexes, monitors, condition variables) simplify concurrent programming by encapsulating synchronisation patterns.

FAQs on Process Synchronization & Critical Section

| 1. What is process synchronization? |  |

| 2. What is meant by critical section in process synchronization? |  |

| 3. What are the common problems that can occur in process synchronization? |  |

| 4. What are the different process synchronization techniques? |  |

| 5. How does process synchronization impact the performance of a system? |  |

| Explore Courses for Computer Science Engineering (CSE) exam |  |

| Get EduRev Notes directly in your Google search |  |