I/O scheduling

How are I/O operations performed?

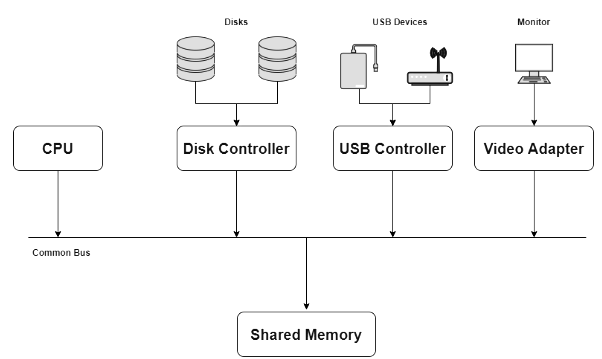

The operating system contains a portion of kernel code dedicated to managing input/output (I/O). This code improves the reliability and performance of the system by coordinating requests from processes, device drivers, and hardware controllers. A typical computer system contains one or more CPUs and several device controllers connected by a system bus. Device controllers present an abstraction to the OS; device drivers implement that abstraction and provide the software interface to I/O devices. The OS and device drivers mediate access to shared resources such as device registers and main memory, and they handle data transfer, buffering and error reporting.

Common Bus Controller

Common Bus ControllerI/O requests in operating systems

An I/O operation usually follows this logical flow:

- A process issues an I/O request via a system call (for example, read or write).

- The request is handled by the kernel and routed to the appropriate device driver.

- If direct memory access (DMA) or a dedicated I/O channel is used, the device controller and DMA hardware perform the data transfer directly to/from memory; the CPU is freed for other work.

- The device controller signals completion to the OS, commonly with an interrupt; the device handler then notifies the requesting process (waking it if it was blocked).

To manage many concurrent requests and devices efficiently, the OS organises I/O management into cooperating components. Typical divisions are the I/O traffic controller, the I/O scheduler, and the I/O device handler. Each performs a distinct role:

- I/O traffic controller: Keeps track of the status of devices, control units and communication channels; decides path selection and resource allocation at the hardware/channel level.

- I/O scheduler: Applies policies to decide the order in which pending I/O requests are served, balancing throughput, fairness and latency.

- I/O device handler: Handles device interrupts, manages the mechanics of individual transfers, and executes the low-level steps required to start or complete a request.

I/O scheduling in operating systems

I/O scheduling organises the order in which pending I/O requests are dispatched to devices. The goals of I/O scheduling typically include:

- Minimising mechanical movement (for rotating disks, minimising seek distance reduces latency).

- Maximising throughput (requests completed per unit time).

- Reducing average response time and its variance (predictable performance).

- Providing fairness and avoiding starvation of low-priority requests.

I/O scheduling differs from CPU scheduling in one important practical way: once a channel program or device transfer has been started, it is usually not preempted. Device-level operations tend to be short (tens to a few hundreds of milliseconds for traditional disks) and the hardware completes the transfer; the scheduler normally selects the next request only when the device becomes free. Modern systems, however, may support prioritisation (so higher-priority I/O requests can be served before lower-priority ones when possible) and sophisticated queues for different classes of requests.

I/O traffic controller

The traffic controller is responsible for mapping requests onto physical paths and for managing shared communication resources. Its main tasks are:

- Check whether at least one path to the device is available.

- If multiple paths exist, select which path to use for the request.

- If all paths are occupied, predict or determine which path will become available soonest and queue requests accordingly.

I/O scheduler

The I/O scheduler maintains queues of pending requests and implements policies to decide service order. Under heavy load the scheduler must choose which request to serve next so as to optimise the chosen metric (seek time, latency, fairness, etc.). Typical techniques used by schedulers include multiple queues (for priority levels or device classes), request merging (combine nearby requests to reduce movement), and reordering seeks to reduce head travel for rotating disks.

I/O device handler

The device handler interfaces with hardware interrupts and the device driver to start and finish transfers. It coordinates the actual movement of data, updates device and request status, and invokes the scheduler when a device becomes free. The choice of scheduling algorithm is central to the device handler's behaviour for block devices such as disks.

Common I/O scheduling algorithms

Each algorithm below attempts to improve one or more of the scheduling goals (seek length, response time, fairness). A short description, typical behaviour and common trade-offs are given for each.

- First-Come First-Serve (FCFS)

Requests are served in arrival order. It is simple to implement and fair in the sense of preserving arrival order. FCFS can produce high seek times if requests are distributed widely on the disk; overall response time may be poor under heavy or adversarial workloads.

Advantages: simplicity and fairness. Disadvantages: potentially large average seek and response times.

- Shortest Seek Time First (SSTF)

SSTF chooses the pending request whose target track is closest to the current head position. The effect is analogous to Shortest Job First in CPU scheduling: requests that are close are favoured, reducing total arm movement.

Advantages: typically lower total seek than FCFS. Disadvantages: can cause starvation for requests far from frequently accessed regions (those ''out of the way'').

- SCAN (Elevator algorithm)

The head moves in one direction servicing all requests until it reaches the end, then reverses direction and services requests on the return trip. The head behaves like an elevator: it sweeps back and forth across the disk.

Advantages: better overall fairness than SSTF and reduced variance in response time; it bounds wait times in practice. Disadvantages: can still produce variable wait times depending on request distribution.

- LOOK (Elevator variant)

LOOK is a refinement of SCAN. Instead of going all the way to the outermost tracks, the head reverses direction at the last pending request in its path; it "looks" ahead and only travels as far as needed.

Advantages: avoids unnecessary travel to empty regions of the disk, typically reducing service time versus SCAN. Disadvantages: similar to SCAN in other respects; still possible to favour certain regions.

- Other variations of SCAN

- N-Step Scan

Pending requests are partitioned into groups of at most N requests. The scheduler services one group at a time using SCAN; new requests arriving during a group's service are placed into the following group. This reduces the chance of starvation by bounding how long newly arriving requests must wait before being considered.

- C-SCAN (Circular SCAN)

C-SCAN services requests in one direction only. When the head reaches the last track in that direction, it quickly returns to the first track without servicing requests on the return; service then resumes in the same direction. This provides a more uniform wait time across tracks because every request sees at most one full sweep's worth of delay.

- C-LOOK

C-LOOK is an optimized version of C-SCAN. The head services requests in one direction and, instead of travelling to the physical end of the disk, jumps back only to the lowest-numbered pending request and resumes. This avoids unnecessary travel across empty track ranges and reduces total movement compared with C-SCAN.

- N-Step Scan

Design criteria and trade-offs

When selecting an I/O scheduling policy the OS designer balances several criteria:

- Throughput: maximise the number of completed I/O operations per unit time.

- Latency / Response time: minimise average time from request issuance to completion.

- Variance: reduce variation in response times for predictability.

- Fairness / Starvation avoidance: ensure low-priority requests are not indefinitely delayed.

- Workload characteristics: random versus sequential access patterns favour different algorithms (e.g., SSTF/LOOK often benefit mixed workloads; C-SCAN gives more uniform waiting for uniformly distributed requests).

Practical considerations

Modern storage hardware and system designs change how scheduling is applied:

- Solid-state drives (SSDs) have negligible seek time compared with magnetic disks, so many traditional disk scheduling heuristics become less important; throughput and fairness, and flash wear-level considerations, take precedence.

- Hardware features such as NCQ (Native Command Queuing) on disks allow the controller to reorder requests; the OS scheduler must cooperate with or defer to device-level reordering.

- Block request merging and elevator-like scheduling are often performed at multiple layers (file system, block layer, device driver, controller) and careful coordination avoids redundant reordering.

- Real-time systems or high-priority workloads may require strict priority scheduling or guaranteed bounded-latency schemes.

Summary

The OS manages I/O by coordinating device drivers, controllers and schedulers. I/O scheduling aims to reduce mechanical movement (for disks), improve throughput and response time, and provide fairness. A range of algorithms-FCFS, SSTF, SCAN, LOOK and their variants such as C-SCAN and C-LOOK-exist to meet different workload needs. The choice of algorithm depends on device characteristics and application requirements: what is optimal for a rotating disk may be unnecessary for flash storage. Understanding these algorithms and their trade-offs is essential for designing and tuning system performance.

FAQs on I/O scheduling

| 1. What's the difference between FCFS and SSTF disk scheduling algorithms? |  |

| 2. Why does the elevator algorithm reduce head movement compared to other I/O scheduling methods? |  |

| 3. How do I/O scheduling algorithms affect overall system performance and throughput? |  |

| 4. What causes starvation in I/O scheduling and which algorithms prevent it? |  |

| 5. What's the practical difference between SCAN and C-SCAN disk scheduling for real applications? |  |

| Explore Courses for Computer Science Engineering (CSE) exam |  |

| Get EduRev Notes directly in your Google search |  |